
The First Feature-Complete Privacy Stack Is Here

Alpha is live: a fully feature-complete, privacy-first network. The infrastructure is in place, privacy is native to the protocol, and developers can now build truly private applications.

Nine years ago, we set out to redesign blockchain for privacy. The goal: create a system institutions can adopt while giving users true control of their digital lives. Privacy band-aids are coming to Ethereum (someday), but it’s clear we need privacy now, and there’s an arms race underway to build it. Privacy is complex; it’s not a feature you can bolt on as an afterthought. It demands a ground-up approach, deep tech stack integration, and complete decentralization.

In November 2025, the Aztec Ignition Chain went live as the first decentralized L2 on Ethereum. It’s the coordination layer that the execution layer sits on top of. The network is not operated by Aztec Labs or the Aztec Foundation; it’s run by the community, making it the true backbone of Aztec.

With the infrastructure in place and a unanimous community vote, the network enters Alpha.

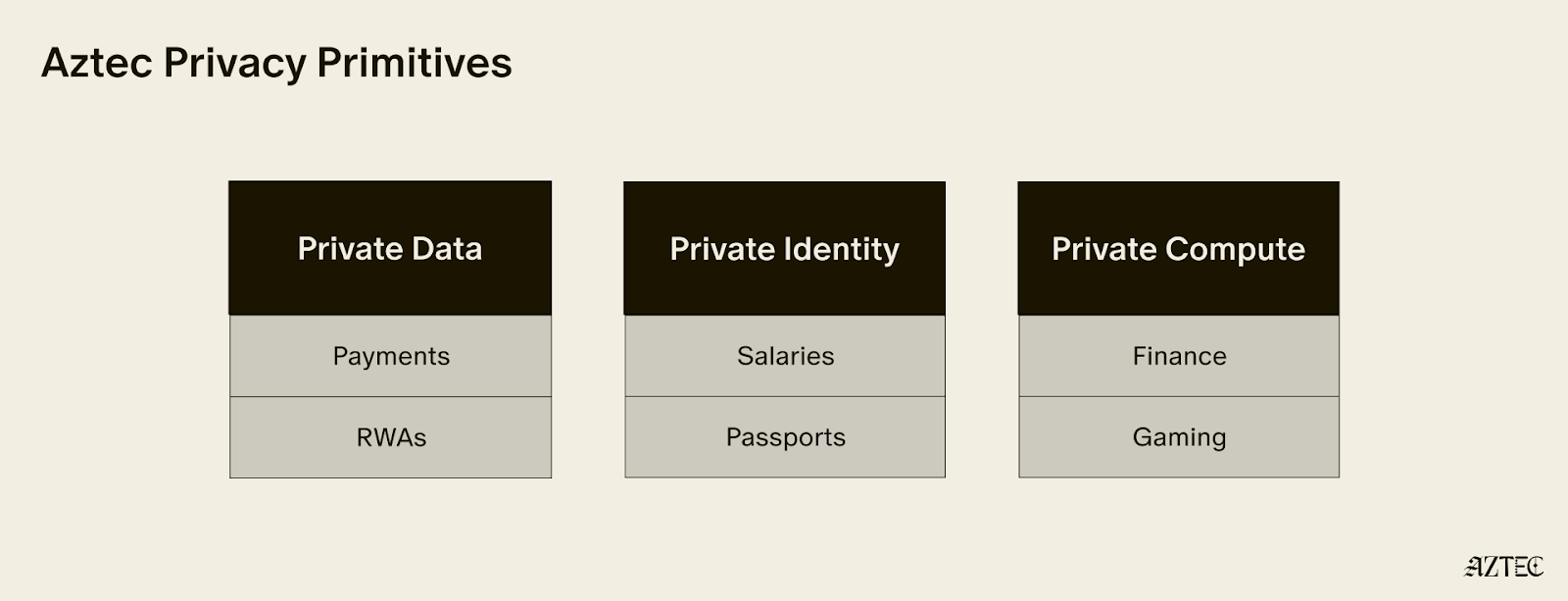

Alpha is the first Layer 2 with a full execution environment for private smart contracts. All accounts, transactions, and the execution itself can be completely private. Developers can now choose what they want public and what they want to keep private while building with the three privacy pillars we have in place across data, identity, and compute.

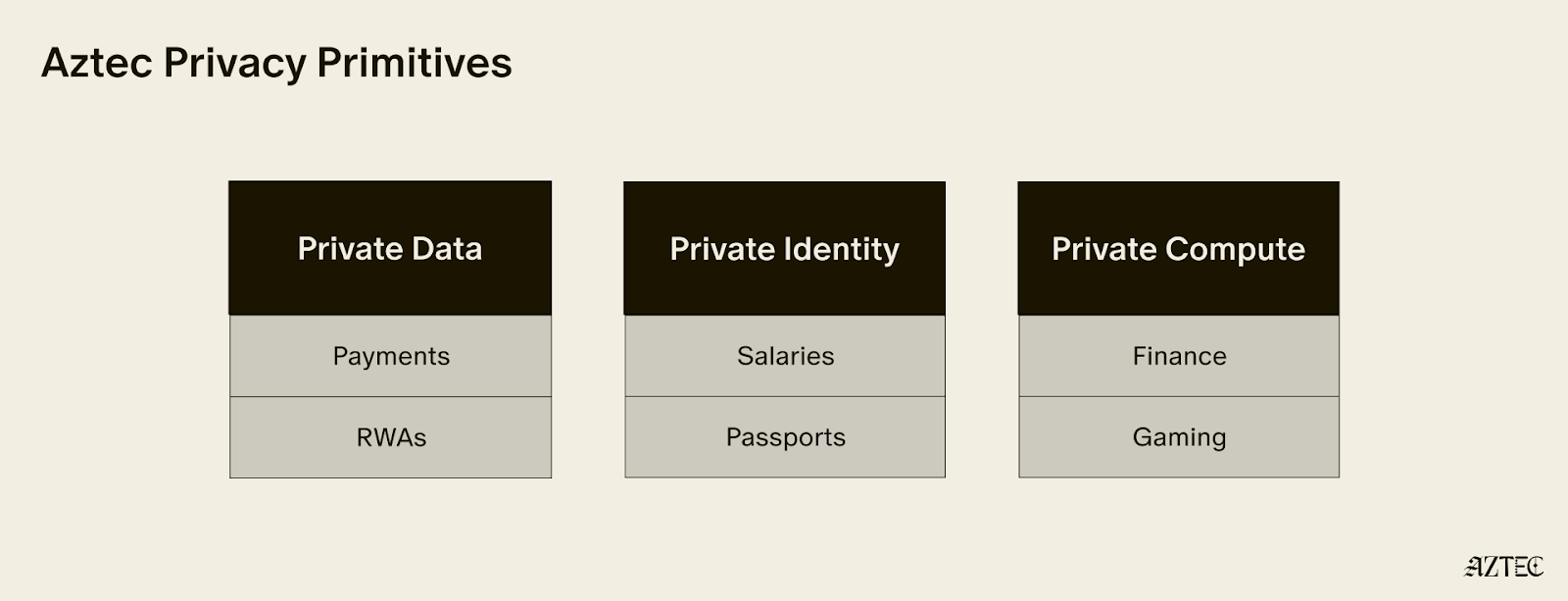

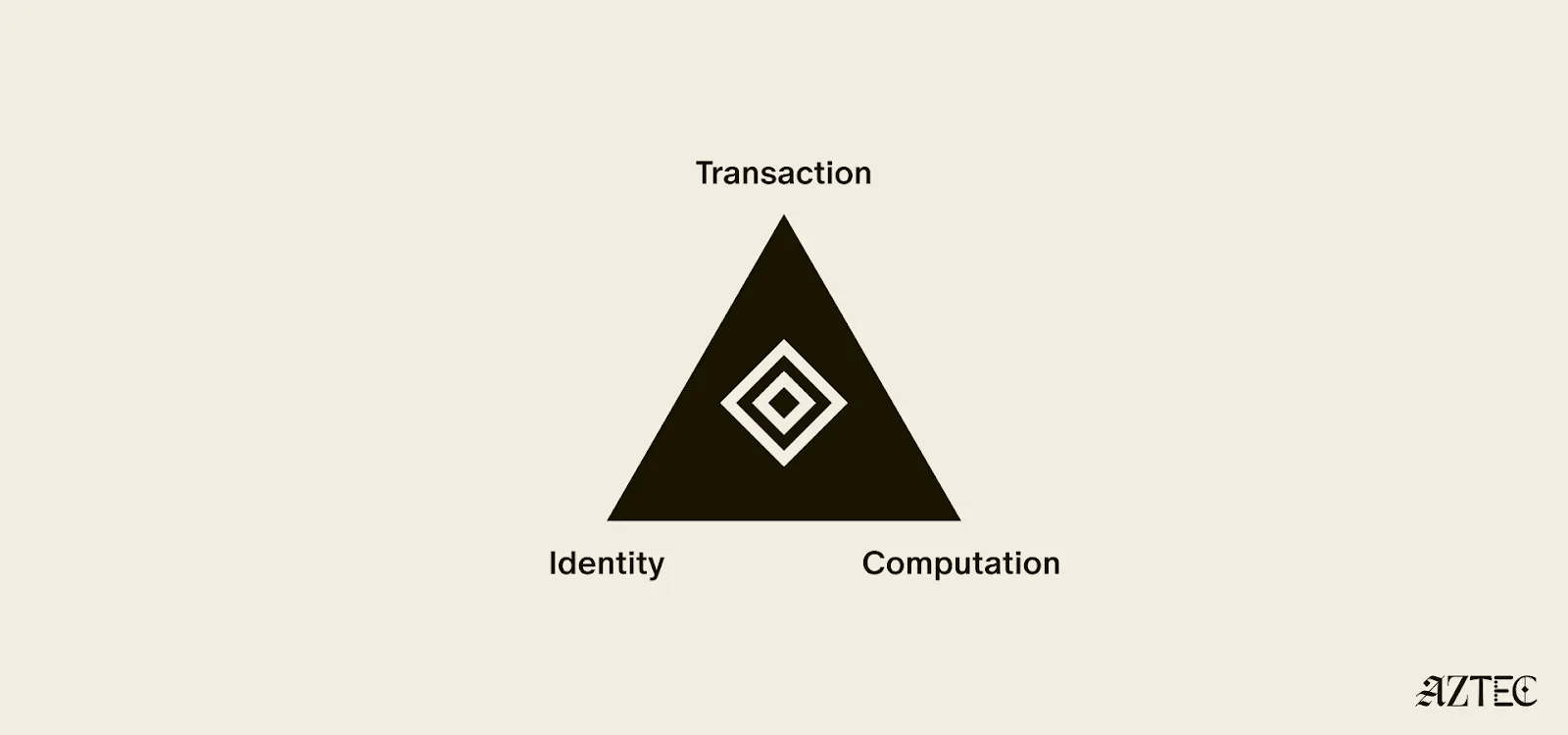

These privacy pillars, which can be used individually or combined, break down into three core layers:

Alpha is feature-complete, meaning this is the only full-stack solution for adding privacy to your business or application. You build, and Aztec handles the cryptography under the hood.

It’s Composable. Privacy-preserving contracts are not isolated; they can talk to each other and seamlessly blend private and public state across contracts. Privacy can be preserved across contract calls for full call-stack privacy.

No backdoor access. Aztec is the only decentralized L2, and is launching as a fully decentralized rollup with a Layer 1 escape hatch.

It’s Compliant. Companies have been missing out on the benefits of blockchains because transparent chains expose user data. Private networks protect that data while still offering fully customizable controls, so companies can now build compliant apps that move value around the world instantly.

Developers can explore our privacy primitives across data, identity, and compute and start building with them using the documentation here. Note that this is an early version of the network with known vulnerabilities; see this post for details. While this is the first iteration of the network, several upgrades will secure and harden it on our path to Beta. If you’d like to learn more about integrating privacy into your project, reach out here.

To hear directly from our Cofounders, join our live Q&A from Cannes on Tuesday, March 31st, at 9:30 am ET. Follow us on X to get the latest updates from the Aztec Network.

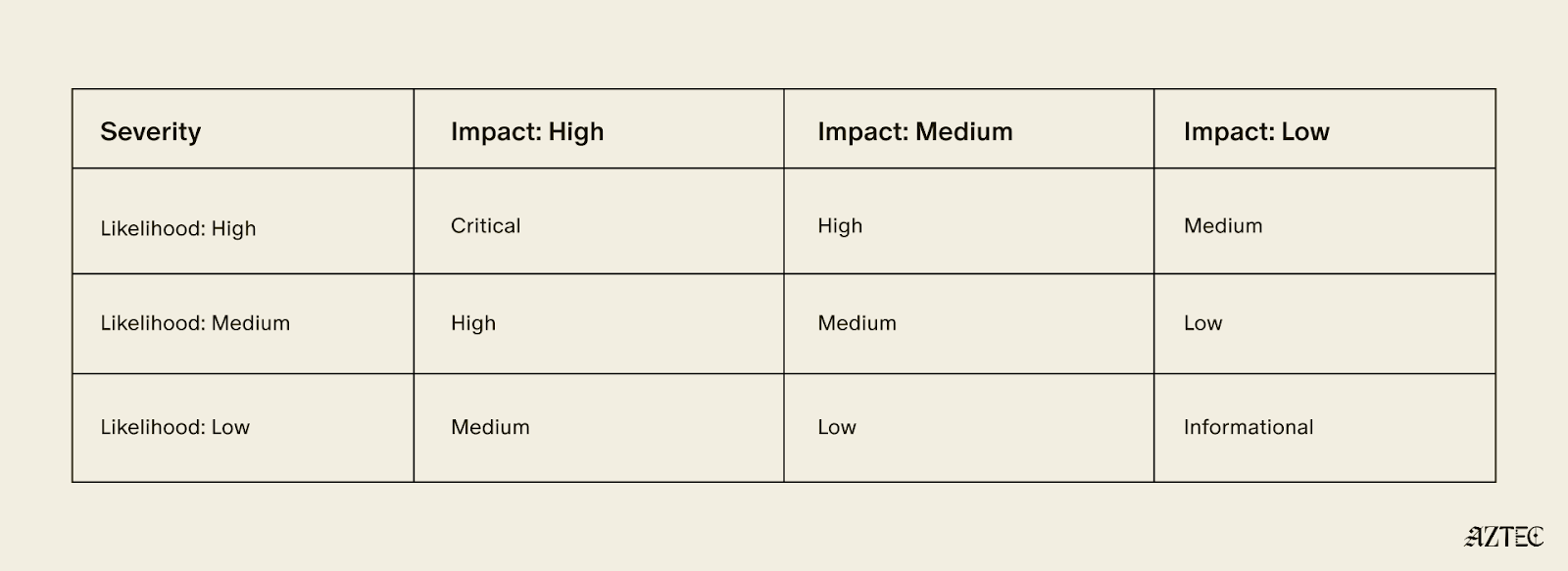

On Wednesday 17 March 2026, our team discovered a new vulnerability in the Aztec Network. After analysis, it was confirmed as critical according to our vulnerability matrix.

The vulnerability affects the proving system as a whole, and is not mitigated via public re-execution by the committee of validators. Exploitation can lead to severe disruption of the protocol and theft of user funds.

In accordance with our policy, fixes for the network will be packaged and distributed with the “v5” release of the network, currently planned for July 2026.

The actual bug and corresponding patch will not be publicly disclosed until “v5.”

Aztec applications and portals bridging assets from Layer 1s should warn users about the security guarantees of Alpha, in particular, reminding users not to put in funds they are not willing to lose. Portals or applications may add additional security measures or training wheels specific to their application or use case.

We will shortly establish a bug tracker showing the number and severity of bugs known to us in v4. The tracker will be updated as audits and security researchers discover issues, and each new alpha release will get its own tracker. This will allow developers and users to judge for themselves to what extent they are willing to use the network, and we will use the tracker as a primary determinant of whether the network is ready for a "Beta" label.

We have identified a vulnerability in barretenberg that allows incorrect proofs to be included in the Aztec Network mempool, and we ask all node operators to upgrade to version v4.1.2 or later.

We’d like to thank Consensys Diligence & TU Vienna for the recent discovery of a separate vulnerability in barretenberg, categorized as medium for the network and critical for Noir.

We have published a fixed version of barretenberg.

We’d also like to thank Plainshift AI for discovery, reproduction, and reporting of one more vulnerability in the Aztec Network and their ongoing work to help secure the network.

Decentralization is not just a technical property of the Aztec Network; it is the governing principle.

No single team, company, or individual controls how the network evolves. Upgrades are proposed in public, debated in the open, and approved by the people running the network. Decentralized sequencing, proving, and governance are hard-coded into the base protocol so that no central actor can unilaterally change the rules, censor transactions, or appropriate user value.

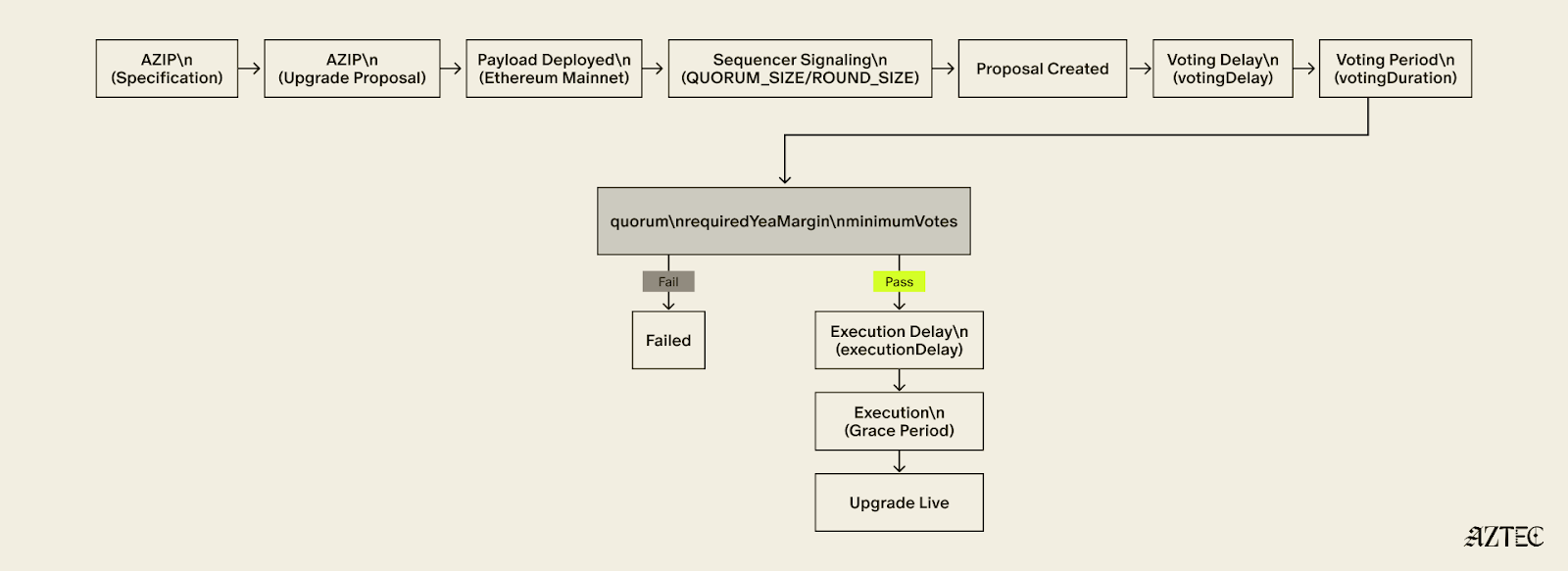

The governance framework that makes this possible has three moving parts: Aztec Improvement Proposal (AZIP), Aztec Upgrade Proposal (AZUP), and the onchain vote. Together, they form a pipeline that takes an idea to a live protocol change, with multiple independent checkpoints along the way.

Every upgrade starts with an AZIP. AZIPs are version-controlled design documents, publicly maintained on GitHub, modeled on the same EIP process that has governed Ethereum since its earliest days. Anyone is encouraged to suggest improvements to the Aztec Network protocol spec.

Before a formal proposal is opened, ideas live in GitHub Discussions, an open forum where the community can weigh in, challenge assumptions, and shape the direction of a proposal before it hardens into a spec. This is the virtual town square: the place where the network's future gets debated in public, not decided behind closed doors.

The AZIP framework is what decentralization looks like in practice. Multiple ideas can surface simultaneously, get stress-tested by the community, and the strongest ones naturally rise. Good arguments win, not titles or seniority. The process selects for quality discussion precisely because anyone can participate and everything is visible.

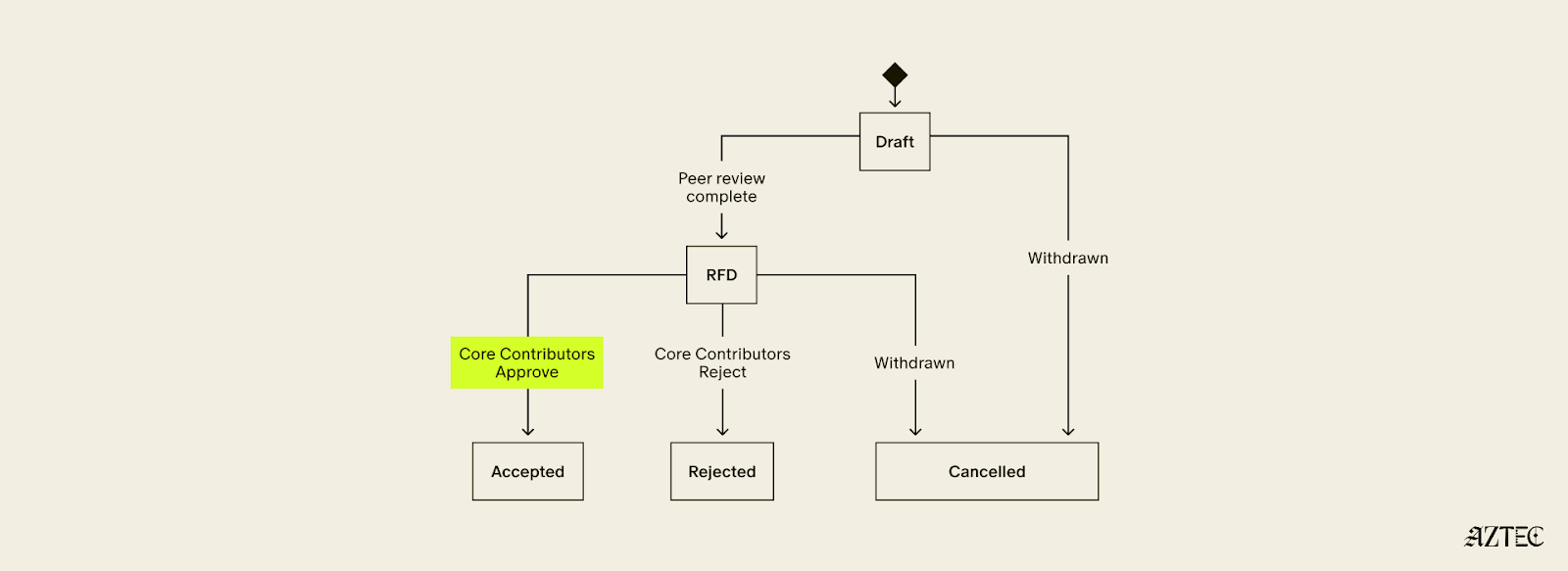

Once an AZIP is formalized as a pull request, it enters a structured lifecycle: Draft, Ready for Discussion, then Accepted or Rejected. Rejected AZIPs are not deleted — they remain permanently in the repository as a record of what was tried and why it was rejected. Nothing gets quietly buried.

Security Considerations are mandatory for all Core, Standard, and Economics AZIPs. Proposals without them cannot pass the Draft stage. Security is structural, not an afterthought.
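The AZIP lifecycle above can be modeled as a small state machine. The sketch below is a hypothetical illustration, not the AZIP repository's actual tooling: the class and field names are invented, and only the statuses and the Security Considerations rule come from the text. It assumes transitions only move forward (Draft, then Ready for Discussion, then Accepted or Rejected).

```python
from enum import Enum


class Status(Enum):
    DRAFT = "Draft"
    READY_FOR_DISCUSSION = "Ready for Discussion"
    ACCEPTED = "Accepted"
    REJECTED = "Rejected"


# Categories whose AZIPs must include a Security Considerations section.
SECURITY_REQUIRED = {"Core", "Standard", "Economics"}

# Forward-only transitions; Accepted and Rejected are terminal.
TRANSITIONS = {
    Status.DRAFT: {Status.READY_FOR_DISCUSSION},
    Status.READY_FOR_DISCUSSION: {Status.ACCEPTED, Status.REJECTED},
    Status.ACCEPTED: set(),
    Status.REJECTED: set(),  # rejected AZIPs stay in the repository as a record
}


class Azip:
    def __init__(self, number: int, category: str, has_security_section: bool):
        self.number = number
        self.category = category
        self.has_security_section = has_security_section
        self.status = Status.DRAFT

    def advance(self, new_status: Status) -> None:
        if new_status not in TRANSITIONS[self.status]:
            raise ValueError(f"illegal transition {self.status.value} -> {new_status.value}")
        # A proposal in a security-sensitive category cannot leave Draft without the section.
        if (
            self.status is Status.DRAFT
            and self.category in SECURITY_REQUIRED
            and not self.has_security_section
        ):
            raise ValueError("Security Considerations required to leave Draft")
        self.status = new_status


azip = Azip(1, "Core", has_security_section=True)
azip.advance(Status.READY_FOR_DISCUSSION)
azip.advance(Status.ACCEPTED)
```

Because `Rejected` has no outgoing transitions, a rejected AZIP can never be quietly revived; it simply stays on record.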

Once Core Contributors, a merit-based and informal group of active protocol contributors, have reviewed an AZIP and approved it for inclusion, it gets bundled into an AZUP.

An AZUP takes everything an AZIP described and deploys it — a real smart contract, real onchain actions. Each AZUP includes a payload that encodes the exact onchain changes that will occur if the upgrade is approved. Anyone can inspect the payload on a block explorer and see precisely what will change before voting begins.

The payload then goes to sequencers for signaling. Sequencers are the backbone of the network. They propose blocks, attest to state, and serve as the first governance gate for any upgrade. A payload must accumulate enough signals from sequencers within a fixed round to advance. The people actually running the network have to express coordinated support before any change reaches a broader vote.
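A minimal sketch of the signaling gate, assuming a round is a fixed window of slots and that a payload advances once enough distinct sequencers signal within that window. `SignalingRound`, `round_size`, and `quorum` are hypothetical names with illustrative values; the real round length, quorum, and signal-counting rules are set by the protocol's L1 contracts and are not reproduced here.

```python
from dataclasses import dataclass, field


@dataclass
class SignalingRound:
    """Signals for one upgrade payload during one fixed round (hypothetical model)."""

    start_slot: int
    round_size: int  # number of slots in the round
    quorum: int      # distinct sequencer signals needed to advance
    signals: set = field(default_factory=set)

    def signal(self, sequencer: str, slot: int) -> None:
        # Only signals cast inside this round's slot window count.
        if not self.start_slot <= slot < self.start_slot + self.round_size:
            raise ValueError("signal outside the current round")
        self.signals.add(sequencer)  # a set, so repeat signals are not double-counted

    def advances(self) -> bool:
        return len(self.signals) >= self.quorum


round_ = SignalingRound(start_slot=100, round_size=10, quorum=3)
for slot, seq in enumerate(["seq-a", "seq-b", "seq-c"], start=100):
    round_.signal(seq, slot)
print(round_.advances())  # True
```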

Once sequencers signal quorum, the proposal moves to tokenholders. Sequencers' staked voting power defaults to "yea" on proposals that came through the signaling path, meaning opposition must be active, not passive. Any sequencer or tokenholder who wants to vote against a proposal must explicitly re-delegate their stake before the voting snapshot is taken. The system rewards genuine engagement from all sides.

For a proposal to pass, it must meet all three conditions: quorum, a supermajority margin, and a minimum participation threshold. If any condition is unmet, the proposal fails.
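The vote-counting rules in the two paragraphs above can be sketched as follows. This is an illustrative model only: the function names are hypothetical, and it assumes the three conditions mean a fraction of total voting power that must participate (quorum), a minimum yea share of votes cast (supermajority margin), and an absolute minimum of votes cast (minimum participation). The actual thresholds and their precise definitions are governance parameters not stated here.

```python
from dataclasses import dataclass


@dataclass
class Tally:
    yea: int
    nay: int
    total_power: int  # all voting power at the snapshot


def tally_at_snapshot(sequencer_stake, redelegated, explicit_votes, total_power):
    """Sequencer stake counts as yea unless it was redelegated before the snapshot.

    sequencer_stake: {sequencer: stake}; redelegated: sequencers whose stake was
    moved before the snapshot; explicit_votes: {voter: (supports, power)}.
    """
    yea = sum(stake for seq, stake in sequencer_stake.items() if seq not in redelegated)
    nay = 0
    for supports, power in explicit_votes.values():
        if supports:
            yea += power
        else:
            nay += power
    return Tally(yea, nay, total_power)


def passes(t: Tally, quorum_fraction: float, yea_fraction: float, min_votes: int) -> bool:
    cast = t.yea + t.nay
    meets_quorum = t.total_power > 0 and cast / t.total_power >= quorum_fraction
    meets_margin = cast > 0 and t.yea / cast >= yea_fraction
    meets_participation = cast >= min_votes
    return meets_quorum and meets_margin and meets_participation  # all three, or it fails


# s2 redelegated before the snapshot and then voted nay with that power.
t = tally_at_snapshot({"s1": 60, "s2": 20}, {"s2"}, {"s2": (False, 20), "h1": (True, 10)}, 200)
print(t, passes(t, quorum_fraction=0.4, yea_fraction=0.66, min_votes=50))
```

The default-yea rule shows up in `tally_at_snapshot`: a sequencer that does nothing contributes yea, so opposing requires the explicit redelegation step.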

Even after a proposal passes, it does not execute immediately. A mandatory delay gives node operators time to deploy updated software, allows the community to perform final checks, and reduces the risk of sudden uncoordinated changes hitting the network. If the proposal is not executed within its grace period, it expires.
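The post-vote timing can be expressed as a simple function of time. A sketch, assuming timestamps in seconds and hypothetical `execution_delay` and `grace_period` parameters; the real values are governance configuration not given in this post.

```python
def execution_state(passed_at: int, now: int, execution_delay: int, grace_period: int) -> str:
    """Where a passed, not-yet-executed proposal stands at time `now` (seconds)."""
    executable_from = passed_at + execution_delay
    expires_at = executable_from + grace_period
    if now < executable_from:
        return "delayed"     # mandatory delay: operators upgrade, community runs final checks
    if now < expires_at:
        return "executable"  # inside the grace period
    return "expired"         # not executed within the grace period


DAY = 24 * 60 * 60
for t in (1 * DAY, 8 * DAY, 20 * DAY):
    print(t // DAY, execution_state(0, t, execution_delay=7 * DAY, grace_period=7 * DAY))
```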

Failed AZUPs cannot be resubmitted. A new proposal must be created that directly addresses the feedback received. There is no way to simply retry and hope for a different result.

The teams building the network have no special governance power. Sequencers, tokenholders, and Core Contributors are the governing actors, each playing a distinct and non-redundant role.

No single party can force or block an upgrade. Sequencers can withhold signals. Tokenholders can vote nay. Proposals not executed within the grace period expire on their own.

This is decentralization working as intended. The network upgrades not because a team decides it should, but because the people running it agree that it should.

If you want to help shape what Aztec becomes, the forum is open. The proposals are public. The town square is yours.

Follow Aztec on X to stay up to date on the latest developments.

Aztec is novel code at the bleeding edge of cryptography and blockchain technology. As the first decentralized L2 on Ethereum, Aztec is powered by a global network of sequencers and provers. Decentralization introduces novel challenges for security: there is no centralized sequencer to pause and no central entity with power over the network. The network's rollout reflects this, with distinct goals at each phase.

Ignition

Validate governance and decentralized block building work as intended on Ethereum Mainnet.

Alpha

Enable transactions at 1 TPS with ~6 s block times, and improve the security of the network through continuous audits and a bug bounty. New releases of the alpha network are expected regularly to address security vulnerabilities. Note that every alpha deployment is distinct, and state is not migrated between Alpha releases.

Beta

We will transition to Beta once the network scales to >10 TPS with reduced block times while maintaining 99.9% uptime. The transition also requires that no critical bugs be disclosed via the bug bounty for 3 months. State migrations across network releases can be considered.

TL;DR: The roadmap from Ignition to Alpha to Beta is designed to reflect the core team's growing confidence in the network's security.

This phased approach lets us balance ecosystem growth with building security confidence, while steadily expanding the community of researchers and tools working to validate the network’s security, soundness, and correctness.


Ultimately, time in production without an exploit is the most reliable indicator of how secure a codebase is.

At the start of Alpha, that confidence is still developing. The core team believes the network is secure enough to support early ecosystem use cases and handle small amounts of value. However, this is experimental alpha software, and users should not deposit more value than they are willing to lose. Apps may choose to limit deposit amounts to reduce risk for users.

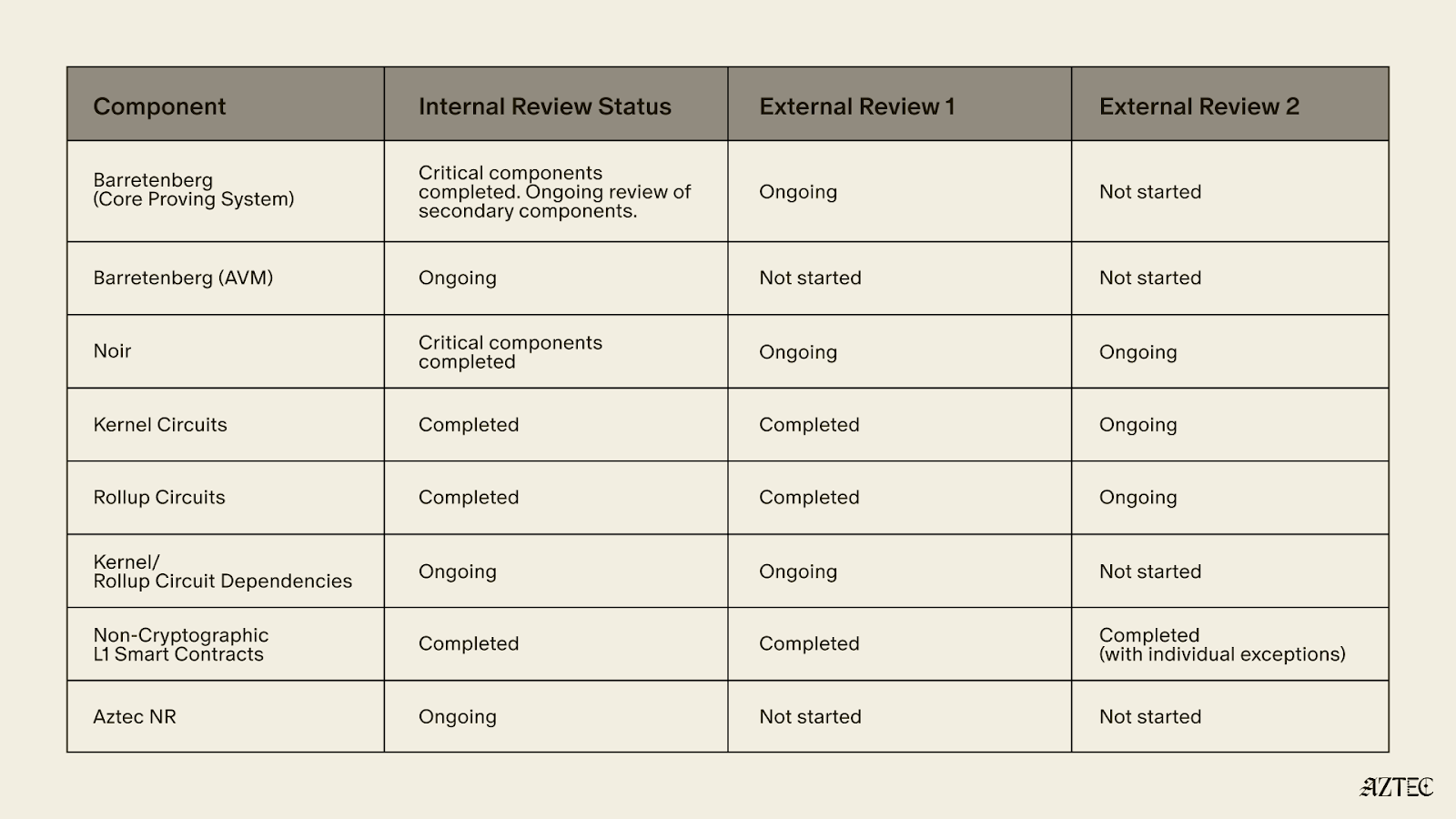

Audits are ongoing throughout Alpha, with the goal to achieve dual external audits across the entire codebase.

The table below shows current security and audit coverage at the time of writing.

Because audits are ongoing, the main bug bounty for the network is not yet live, except for the non-cryptographic L1 smart contracts. We encourage security researchers to responsibly disclose findings in line with our security policy.

As the audits are still ongoing, we expect to discover vulnerabilities in various components. The fixes will be packaged and distributed with the “v5” release.

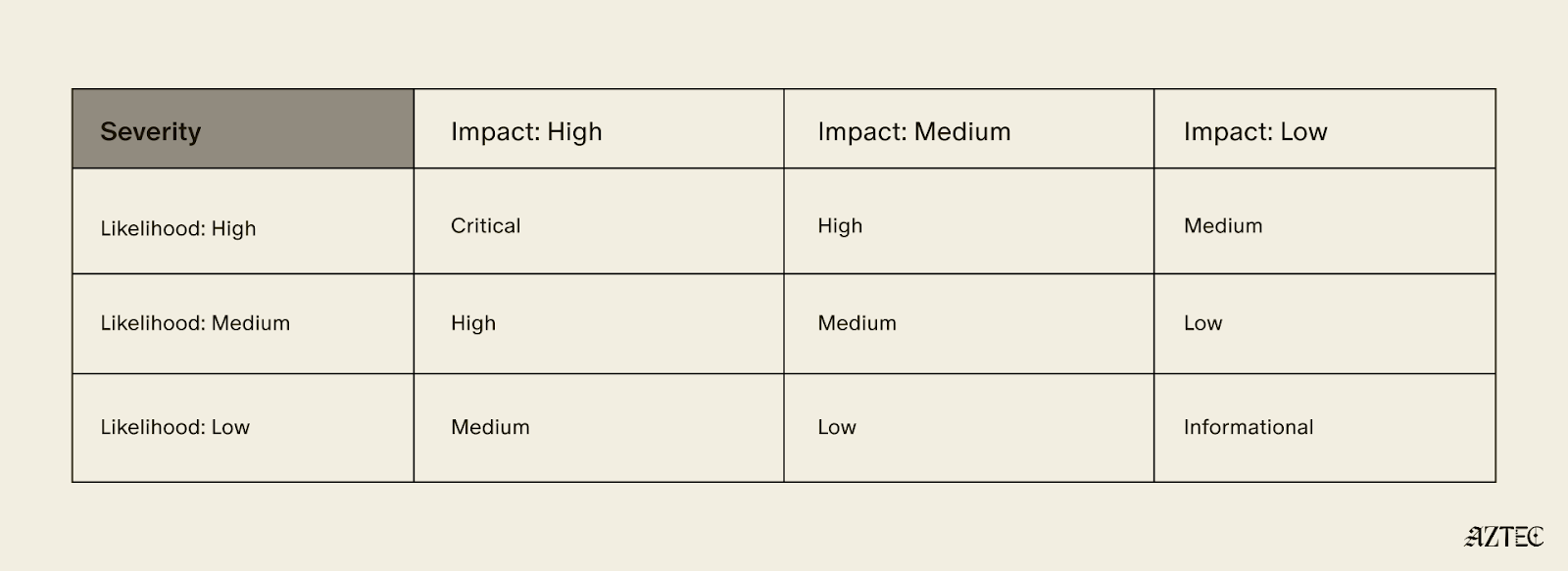

If we discover a vulnerability in “v4” that is Critical according to the following severity matrix and would require changing verification keys to fix, we will first alert portal operators to pause deposits and then post a message on the forum stating that the rollup has a vulnerability.

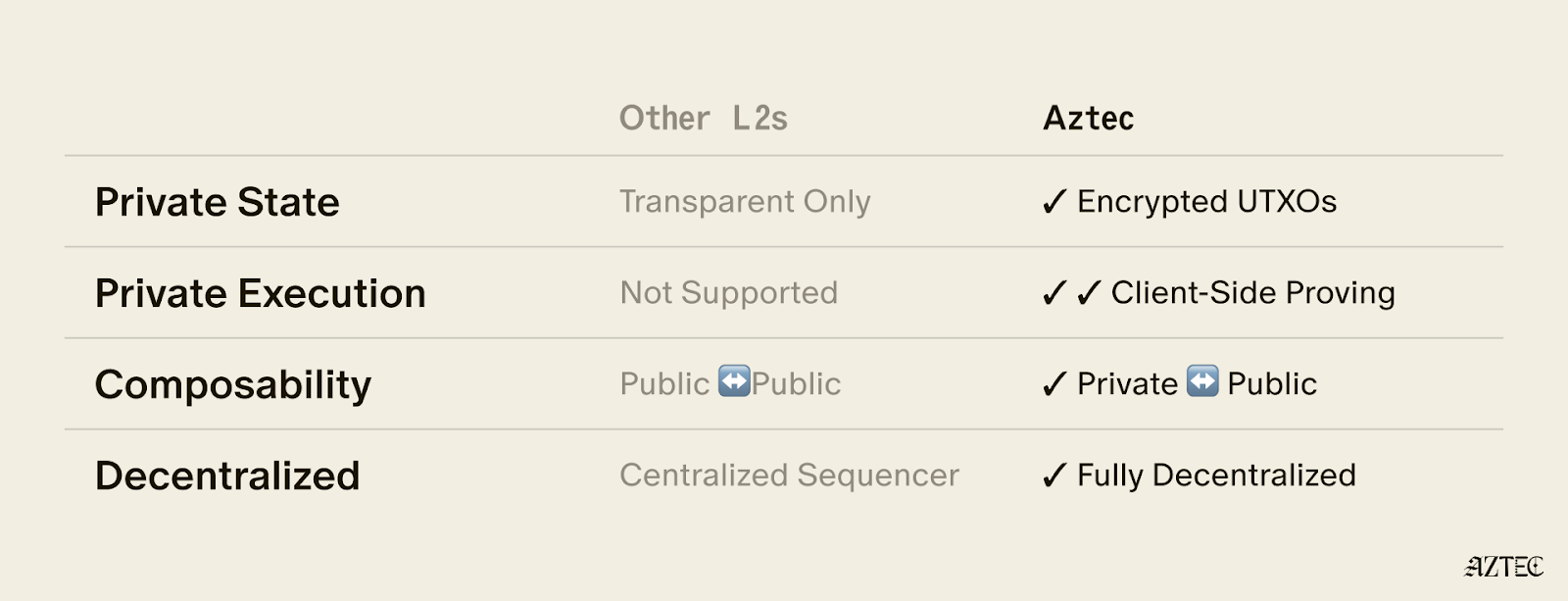

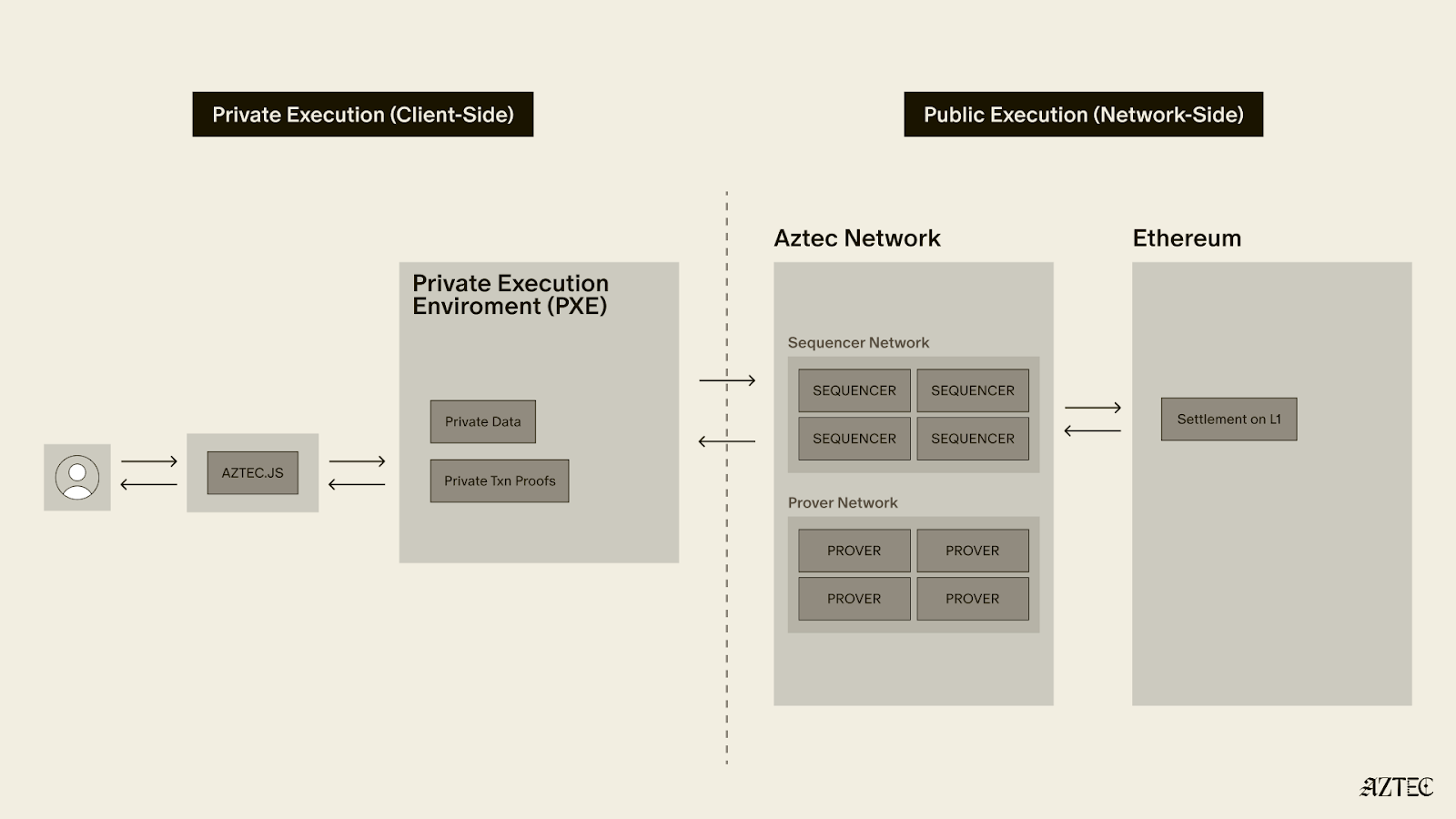

Aztec uses a hybrid execution model that handles private and public execution separately, and the security considerations differ between them.

As the audit table above shows, the Aztec Virtual Machine (AVM) has not yet completed its internal and external audits. This is intentional: all AVM execution is public, which allows it to benefit from a “training wheel”, the validator re-execution committee.

Every 72 seconds, a collection of newly proposed Aztec blocks is bundled into a "checkpoint" and submitted to L1. For each proposed checkpoint, a committee of 48 staking validators, randomly selected from the entire validator set (presently 3,959), re-executes all transactions in every block of the checkpoint and attests to the resulting state roots. At least 33 of the 48 attestations are required for the checkpoint proposal to be considered valid. The committee and the eventual zk proof must agree on the resulting state root for a checkpoint to be added to the proven chain. As a result, an attacker must control 33 of the 48 seats in a given committee to exploit any bug in the AVM.
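The committee check described above reduces to counting matching attestations. This minimal sketch assumes attestations arrive already authenticated (signature verification is omitted) and uses hypothetical function names; only the 48-seat committee, the 33-attestation threshold, and the requirement that the committee and zk proof agree come from the text.

```python
COMMITTEE_SIZE = 48
ATTESTATION_THRESHOLD = 33


def checkpoint_attested(attestations: dict, committee: set, proposed_root: bytes) -> bool:
    """attestations maps validator id -> state root that validator got by re-execution.

    Signatures are assumed to be verified already; votes from non-members are ignored.
    """
    if len(committee) != COMMITTEE_SIZE:
        raise ValueError("unexpected committee size")
    agreeing = sum(
        1 for validator, root in attestations.items()
        if validator in committee and root == proposed_root
    )
    return agreeing >= ATTESTATION_THRESHOLD


def joins_proven_chain(attested: bool, committee_root: bytes, proof_root: bytes) -> bool:
    # The committee and the eventual zk proof must agree on the state root.
    return attested and committee_root == proof_root


committee = {f"v{i}" for i in range(COMMITTEE_SIZE)}
votes = {f"v{i}": b"root-A" for i in range(33)} | {f"v{i}": b"root-B" for i in range(33, 48)}
ok = checkpoint_attested(votes, committee, b"root-A")
print(ok, joins_proven_chain(ok, b"root-A", b"root-A"))
```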

The only time the re-execution committee is not active is during the escape hatch. There, the cost to propose a block is set at a level intended to quantify the security of the execution training wheel. For this version of the alpha network, it is set at 332M AZTEC, a figure intended to approximate the economic protection the committee normally provides and equivalent to roughly 19% of the unstaked circulating supply at the time of writing. Since the Aztec Foundation holds a significant portion of that supply, the effective threshold is considerably higher in practice.

A key design assumption is that just-in-time bribery of the sequencer committee is impractical, so the only realistic attack vector is stake acquisition, not bribery.

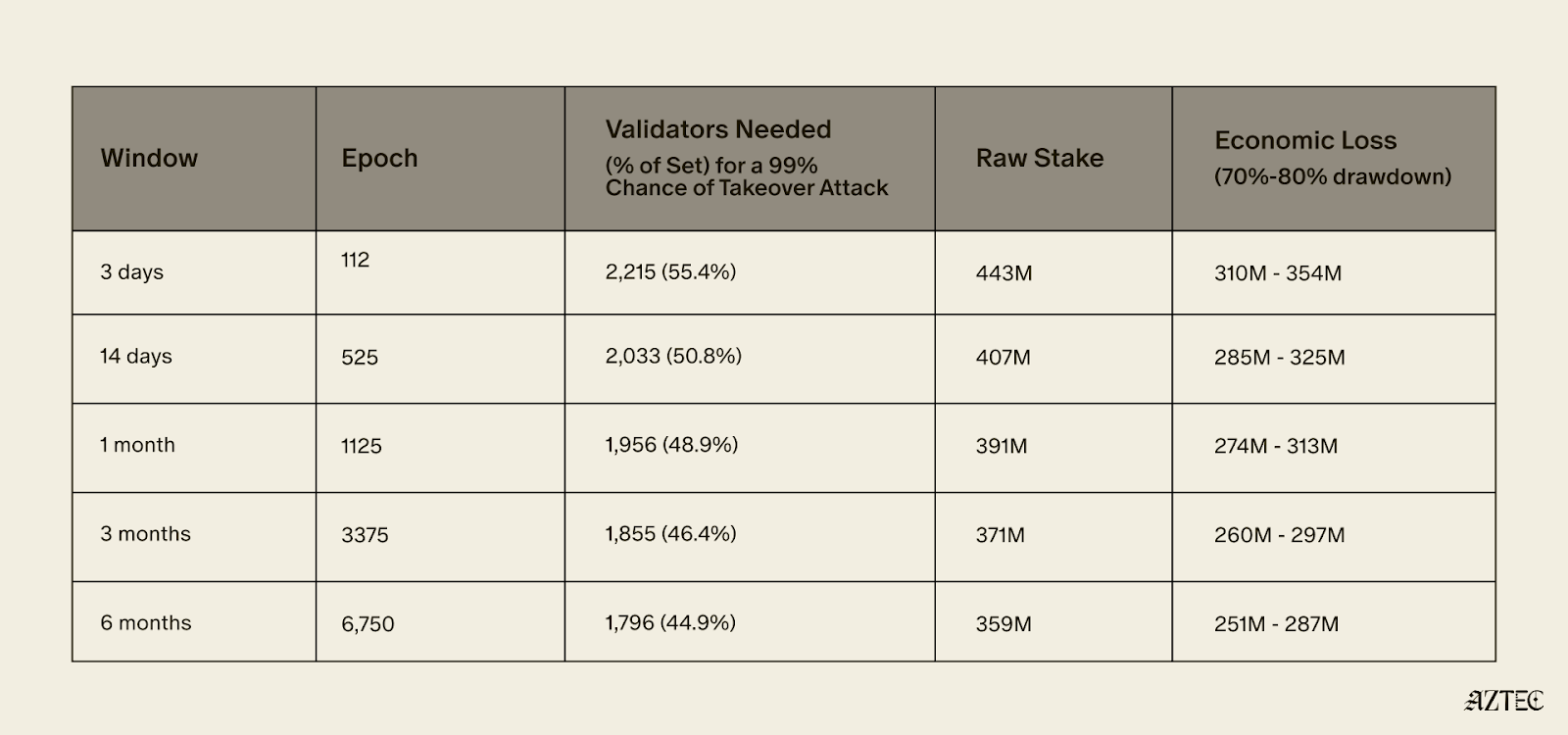

Assuming a sequencer set of 4,000 and a committee that rotates each epoch (~38.4 min), drawn from the full sequencer set using a Fisher-Yates shuffle seeded by L1 RANDAO, the table below shows the probability of success and the amount of stake required.
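To illustrate the selection step, the following sketch draws a committee with a seeded Fisher-Yates shuffle. It is not the on-chain algorithm: Python's `random.Random`, seeded from a SHA-256 hash, stands in for randomness derived from L1 RANDAO, and `select_committee` is a hypothetical name.

```python
import hashlib
import random


def select_committee(validators: list, seed: bytes, size: int = 48) -> list:
    """Seeded Fisher-Yates shuffle, then take the first `size` validators.

    random.Random stands in for L1 RANDAO-derived randomness (illustration only).
    """
    rng = random.Random(hashlib.sha256(seed).digest())
    order = list(validators)
    # Walk backwards, swapping each position with a uniformly chosen index at or below it.
    for i in range(len(order) - 1, 0, -1):
        j = rng.randint(0, i)
        order[i], order[j] = order[j], order[i]
    return order[:size]


validators = [f"seq-{i}" for i in range(4000)]
committee = select_committee(validators, seed=b"epoch-42")
print(len(committee), committee[:3])
```

Because the shuffle is deterministic in its seed, every node given the same seed derives the same committee, while no one can predict it before the seed is known.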

To achieve a 99% probability of controlling at least one supermajority within 3 days, an attacker would need to control approximately 55.4% of the validator set: roughly 2,215 sequencers representing 443M AZTEC in stake. If the exploit succeeds, that stake would likely lose 70-80% of its value, resulting in an expected economic loss of approximately 332M AZTEC.
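The figures above fit together as follows. This assumes each sequencer stakes 200,000 AZTEC (the ratio implied by 443M AZTEC across 2,215 sequencers) and takes 75%, the midpoint of the 70-80% range, as the devaluation:

```latex
\[
\frac{2{,}215}{4{,}000} \approx 55.4\%, \qquad
2{,}215 \times 200{,}000 = 443\text{M AZTEC}, \qquad
443\text{M} \times 0.75 \approx 332\text{M AZTEC}
\]
```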

To achieve only a 0.5% probability of controlling at least one supermajority within 6 months, an attacker would need to control approximately 33.88% of the validator set.
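The probabilities above can be approximated with a hypergeometric tail. The sketch below assumes each epoch's committee is a uniform random sample of 48 distinct validators from 4,000, that epochs are independent and last 38.4 minutes, and that six months is roughly 182 days; 1,355 validators is used as about 33.88% of 4,000. Under these assumptions the output should be close to the figures quoted, but it is an illustration, not the calculation behind the table.

```python
from math import comb


def p_supermajority_per_epoch(n_validators: int, n_attacker: int,
                              committee: int = 48, threshold: int = 33) -> float:
    """Hypergeometric tail: P(attacker holds >= threshold of the committee's seats)."""
    favourable = sum(
        comb(n_attacker, k) * comb(n_validators - n_attacker, committee - k)
        for k in range(threshold, committee + 1)
    )
    return favourable / comb(n_validators, committee)


def p_at_least_once(n_validators: int, n_attacker: int, days: float,
                    epoch_minutes: float = 38.4) -> float:
    """Chance of at least one captured committee over `days`, assuming independent epochs."""
    epochs = int(days * 24 * 60 / epoch_minutes)
    p = p_supermajority_per_epoch(n_validators, n_attacker)
    return 1 - (1 - p) ** epochs


print(f"{p_at_least_once(4000, 2215, days=3):.3f}")    # compare with ~99% over 3 days
print(f"{p_at_least_once(4000, 1355, days=182):.4f}")  # compare with ~0.5% over 6 months
```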

The practical effect of this training wheel is that the network can exist while there are known security issues with the AVM, as long as the value an attacker would gain from any potential exploit is less than the cost of acquiring 332M AZTEC.

The training wheel allows security researchers to spend more time on the private execution paths that don’t benefit from the training wheel and for the network to be deployed in an alpha version where security researchers can attempt to find additional AVM exploits.

In concrete terms, the training wheel means the Alpha network can reasonably secure value up to around 332M AZTEC (~$6.5M at the time of writing).

Ecosystem builders should keep the above limits in mind, particularly when designing portal contracts that bridge funds into the network.

Portals are the main way value will be bridged into the alpha network and, as a result, are also the main target for exploits. Portal design can allow the network to secure far higher value. A portal that holds more than 332M AZTEC in value and allows all of its funds to be taken in one withdrawal, with no rate limits, delays, or pause functionality, is a target for an AVM exploit.

If a portal implements a maximum withdrawal per user, pause functionality or delays for larger withdrawals it becomes harder for an attacker to steal a large quantum of funds in one go.
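A minimal sketch of those three mitigations for a portal's withdrawal path. Everything here is hypothetical: `PortalPolicy` and its parameters are invented for illustration, the per-user limit is modeled as a cumulative cap, and a real portal would enforce these rules in its L1 contract rather than in Python.

```python
from dataclasses import dataclass, field


@dataclass
class PortalPolicy:
    """Hypothetical withdrawal guard for an L1 portal; all limits are illustrative."""

    max_per_user: int     # cumulative cap on one user's withdrawals
    large_threshold: int  # withdrawals at or above this amount are delayed
    delay_seconds: int
    paused: bool = False
    withdrawn: dict = field(default_factory=dict)
    pending: list = field(default_factory=list)  # (user, amount, release_time)

    def request(self, user: str, amount: int, now: int) -> str:
        if self.paused:
            raise RuntimeError("portal paused")
        if self.withdrawn.get(user, 0) + amount > self.max_per_user:
            raise ValueError("per-user withdrawal cap exceeded")
        self.withdrawn[user] = self.withdrawn.get(user, 0) + amount
        if amount >= self.large_threshold:
            self.pending.append((user, amount, now + self.delay_seconds))
            return "delayed"
        return "released"

    def release_due(self, now: int) -> list:
        """Release delayed withdrawals whose delay has elapsed, unless paused."""
        if self.paused:
            return []
        due = [p for p in self.pending if p[2] <= now]
        self.pending = [p for p in self.pending if p[2] > now]
        return due


portal = PortalPolicy(max_per_user=100, large_threshold=50, delay_seconds=3600)
print(portal.request("alice", 10, now=0), portal.request("alice", 60, now=0))
```

The delay is what makes the pause useful: a large withdrawal triggered by an exploit sits in `pending` long enough for operators to pause the portal before it is released.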

The Aztec Alpha code is ready to go. The next step is for someone in the community to submit a governance proposal and for the network to vote on enabling transactions. This is decentralization working as intended.

Once live, Alpha will run at 1 TPS with roughly 6-second block times. Audits are still ongoing across several components, so keep deposits small and only put in what you're comfortable losing.

On the security side, a 48-validator re-execution committee provides the main protection during Alpha, requiring 33/48 consensus on every 72-second checkpoint. Successfully attacking the AVM would require controlling roughly 55% of the validator set at a cost of around 332M AZTEC, putting the practical security ceiling at approximately $6.5M.

Alpha is about growing the ecosystem, expanding the security of the network, and accumulating the one thing no audit can shortcut: time in production. This is the network maturing in exactly the way it was designed to as it progresses toward Beta.

The Ignition Chain launched late last year as the first fully decentralized L2 on Ethereum, a huge milestone for decentralized networks. The team has reinvented what true programmable privacy means, building the execution model from the ground up and combining the programmability of Ethereum with the privacy of Zcash in a single execution environment.

Since then, the network has run with zero downtime, supported by 3,500+ sequencers and 50+ provers across five continents. With the infrastructure now in place, the network is fully in the hands of the community, and the past 8 years of work are now converging.

Major upgrades have landed across four tracks: the execution layer, the proving system, the programming language (Noir), and the decentralization stack. Together, these milestones deliver on Aztec’s original promise: a system where developers can write fully programmable smart contracts with customizable privacy.

The infrastructure is in place. The code is ready. And we’re ready to ship.

The execution layer delivers on Aztec's core promise: fully programmable, privacy-preserving smart contracts on Ethereum.

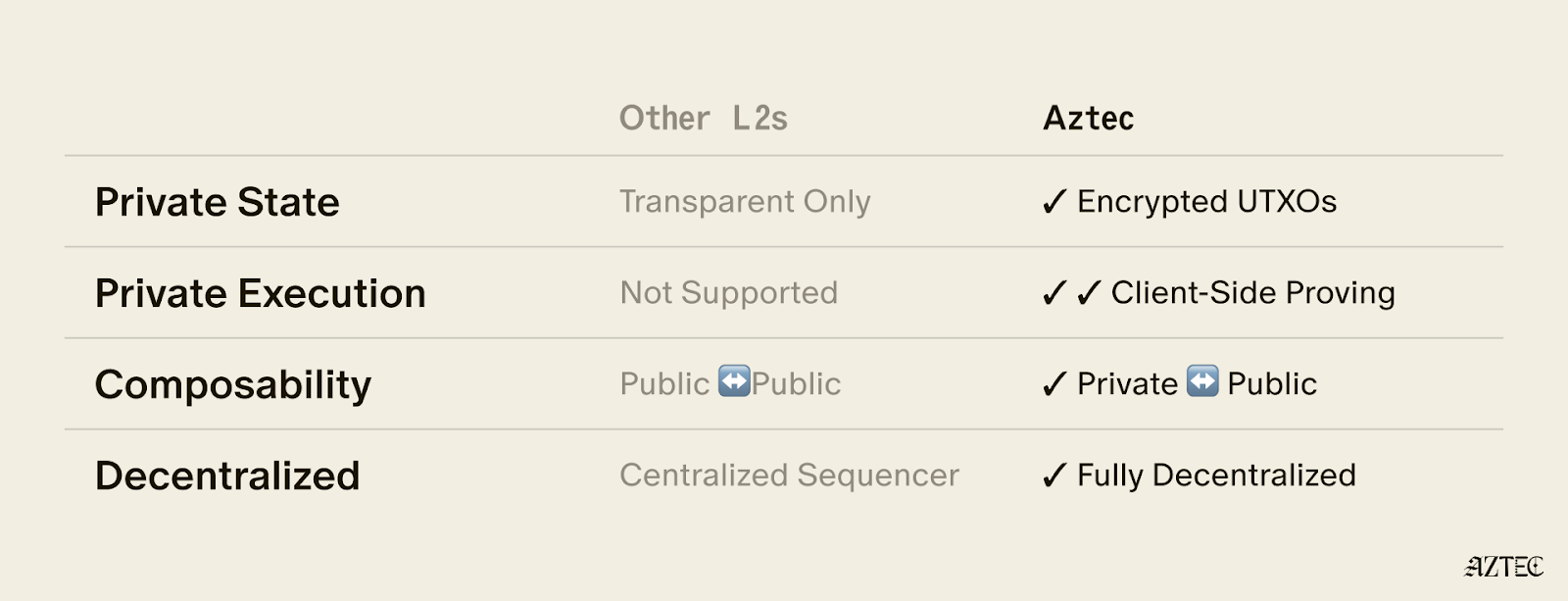

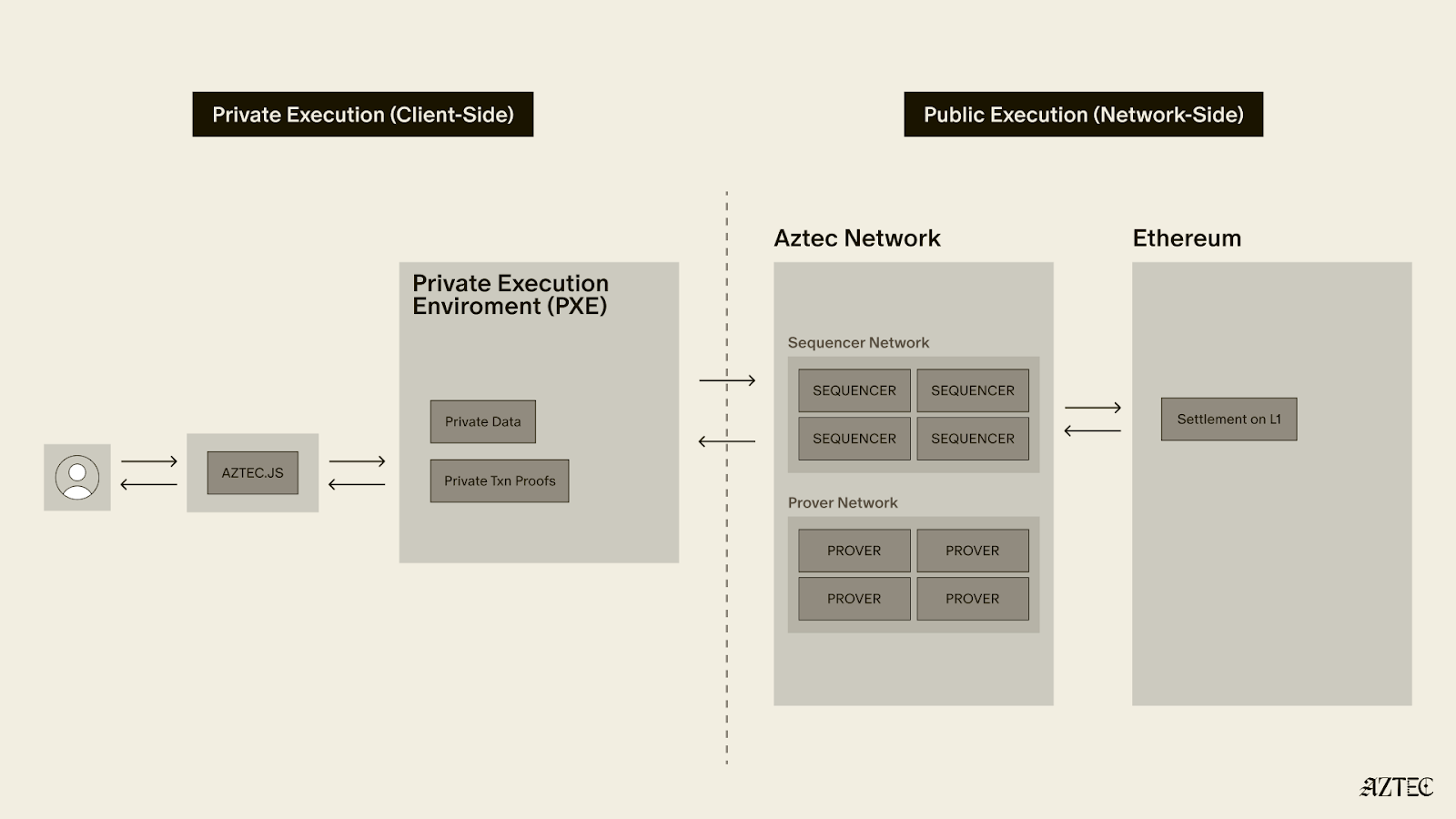

A complete dual state model, with both private and public state, is now in place. Private functions execute client-side in the Private Execution Environment (PXE), running directly in the user's browser and generating zero-knowledge proofs locally, so that private data never leaves the original device. Public functions execute on the Aztec Virtual Machine (AVM) on the network side.

Aztec.js is now live, giving developers a full SDK for managing accounts and interacting with contracts. Native account abstraction has been implemented, meaning every account is a smart contract with customizable authentication rules. Note discovery has been solved through a tagging mechanism, allowing recipients to efficiently query for relevant notes without downloading and decrypting everything on the network.
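Tag-based note discovery can be illustrated with a toy model. This sketch assumes a sender and recipient share a secret and derive one tag per note by hashing that secret with an incrementing index; SHA-256, `tag`, and `discover` are stand-ins, not Aztec's actual key derivation, hash, or node API. The point is that the recipient looks up only the tags it expects instead of downloading and trial-decrypting every note.

```python
import hashlib


def tag(shared_secret: bytes, index: int) -> bytes:
    """Hypothetical tag derivation: hash of a sender-recipient shared secret and a counter."""
    return hashlib.sha256(shared_secret + index.to_bytes(8, "big")).digest()


def discover(logs: dict, shared_secret: bytes, window: int = 10) -> list:
    """Return encrypted payloads whose tags match the expected sequence of indices.

    `logs` maps tag -> encrypted payload and stands in for a node's tag index.
    Scanning stops after `window` consecutive indices with no matching tag.
    """
    found, index, misses = [], 0, 0
    while misses < window:
        payload = logs.get(tag(shared_secret, index))
        if payload is None:
            misses += 1
        else:
            found.append(payload)
            misses = 0
        index += 1
    return found


secret = b"alice-bob-shared-secret"
logs = {tag(secret, i): f"note-{i}".encode() for i in range(3)}
logs.update({tag(b"someone-else", i): b"noise" for i in range(100)})
print(discover(logs, secret))
```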

Contract standards are underway, with the Wonderland team delivering AIP-20 for tokens and AIP-721 for NFTs, along with escrow contracts and logic libraries, providing the production-ready building blocks for the Alpha Network.

The proving system is what makes Aztec's privacy guarantees real, and it has deep roots.

In 2019, Aztec's cofounder Zac Williamson and Chief Scientist Ariel Gabizon introduced PLONK, which became one of the most widely used proving systems in zero-knowledge cryptography. Since then, Aztec's cryptographic backend, Barretenberg, has evolved through multiple generations, each facilitating faster, lighter, and more efficient proving than the last. The latest innovation, CHONK (Client-side Highly Optimized ploNK), is purpose-built for proving on phones and browsers and is what powers proof generation for the Alpha Network.

CHONK is a major leap forward for the user experience, dramatically reducing the memory and time required to generate proofs on consumer devices. It leverages best-in-class circuit primitives, a HyperNova-style folding scheme for efficiently processing chains of private function calls, and Goblin, a hyper-efficient purpose-built recursion acceleration scheme. The result is that private transactions can be proven on the devices people actually use, not just powerful servers.

This matters because privacy on Aztec means proofs are generated on the user's own device, keeping private data private. If proving is too slow or too resource-intensive, privacy becomes impractical. CHONK makes it practical.

Decentralization is what makes Aztec's privacy guarantees credible. Without it, a central operator could censor transactions, introduce backdoors, or compromise user privacy at will.

Aztec addressed this by hardcoding decentralized sequencing, proving, and governance directly into the base protocol. The Ignition Chain has proven the stability of this consensus layer, maintaining zero downtime with over 3,500 sequencers and 50+ provers running across five continents. Aztec Labs and the Aztec Foundation run no sequencers and do not participate in governance.

Noir 1.0 is nearing completion, bringing a stable, production-grade language within reach. Aztec's own protocol circuits have been entirely rewritten in Noir, meaning the language is already battle-tested at the deepest layer of the stack.

Internal and external audits of the compiler and toolchain are progressing in parallel, and security tooling including fuzzers and bytecode parsers is nearly finished. A stable, audited language means application teams can build on Alpha with confidence that the foundation beneath them won't shift.

The code for Alpha Network, a functionally complete and raw version of the network, is ready.

The Alpha Network brings fully programmable, privacy-preserving smart contracts to Ethereum for the first time. It's the culmination of years of parallel work across the four tracks in the Aztec Roadmap. Together, they enable efficient client-side proofs that power customizable smart contracts, letting users choose exactly what stays private and what goes public.

No other project in the space is close to shipping this.

The code is written. The network is running. All the pieces are in place. The governance proposal is now live on the forum and open for discussion. Read through it, ask questions, poke holes, and help shape the path forward.

Once the community is aligned, the proposal moves to a vote. This is how a decentralized network upgrades. Not by a team pushing a button, but by the people running it.

Programmable privacy will unlock a renaissance in onchain adoption. Real-world applications are coming and institutions are paying attention. Alpha represents the culmination of eight years of intense work to deliver privacy on Ethereum.

Now it needs to be battle-tested in the wild.

View the updated product roadmap here and join us on Thursday, March 5th, at 3 pm UTC on X to hear more about the most recent updates to our product roadmap.


Privacy has emerged as a major driver for the crypto industry in 2025. We’ve seen the explosion of Zcash, the Ethereum Foundation’s refocusing of PSE, and the launch of Aztec’s testnet with over 24,000 validators powering the network. Many apps have also emerged to bring private transactions to Ethereum and Solana in various ways, and exciting technologies like ZKPassport, which uses Noir to bring identity on-chain privately, have become some of the most talked-about developments in the space.

Underpinning all of these developments is the emerging consensus that without privacy, blockchains will struggle to gain real-world adoption.

Without privacy, institutions can’t bring assets on-chain in a compliant way or conduct complex swaps and trades without revealing their strategies. Without privacy, DeFi remains dominated and controlled by advanced traders who can see all upcoming transactions and manipulate the market. Without privacy, regular people will not want to move their lives on-chain for the entire world to see every detail about their every move.

While there's been lots of talk about privacy, few can define it. In this piece, we’ll outline the three pillars of privacy and give you a framework for evaluating the privacy claims of any project.

True privacy rests on three essential pillars: transaction privacy, identity privacy, and computational privacy. It is only when we have all three pillars that we see the emergence of a private world computer.

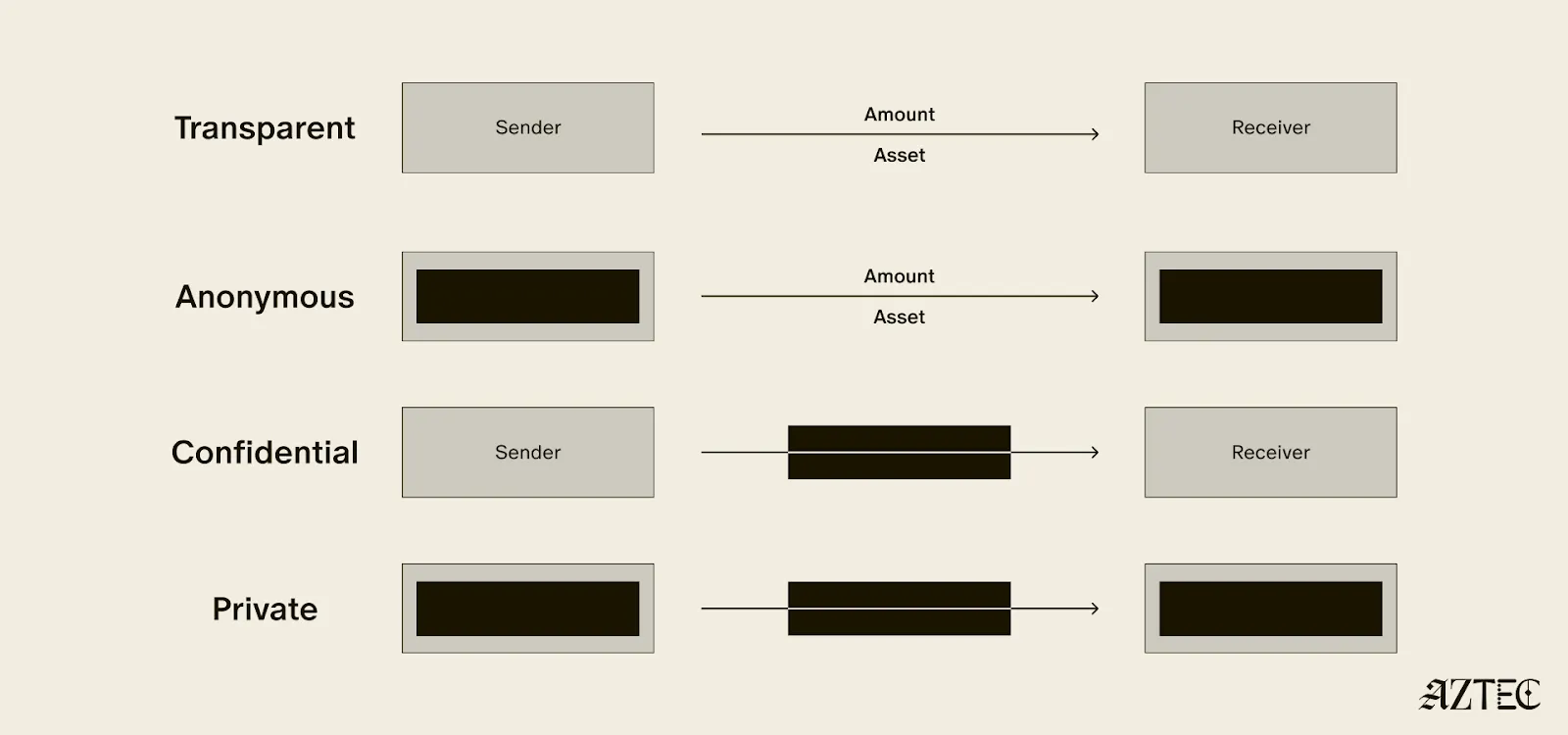

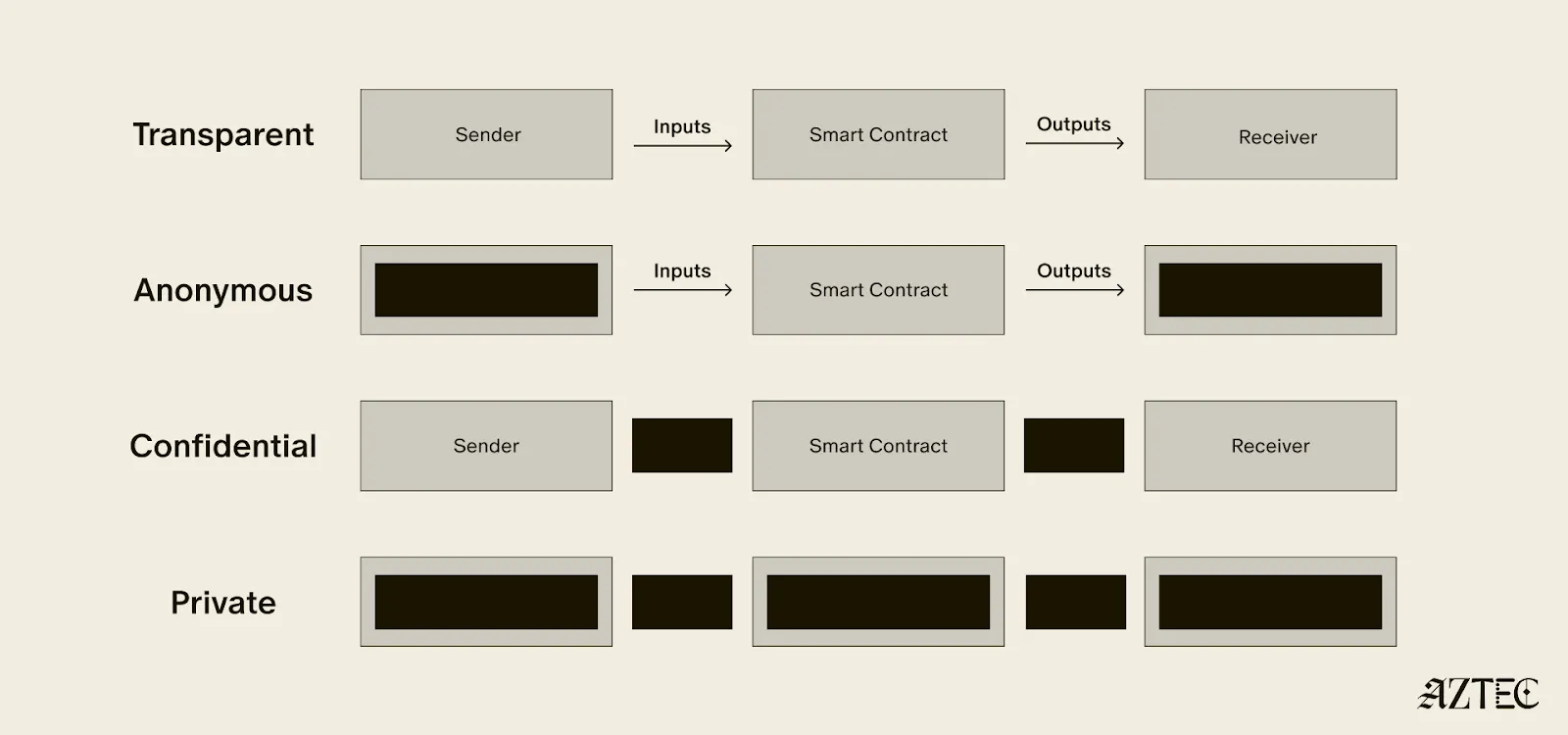

Transaction privacy means that both inputs and outputs are not viewable by anyone other than the intended participants. Inputs include any asset, value, message, or function calldata being sent. Outputs include any state changes, transaction effects, or metadata the transaction produces. Transaction privacy is usually achieved with a UTXO model (like Zcash or Aztec’s private state tree). If a project offers only this pillar, it can be said to be confidential, but not private.

Identity privacy means that the identities of those involved are not viewable by anyone other than the intended participants. This includes addresses or accounts and any information about the participants' identities, such as tx.origin, msg.sender, or links between one’s private account and public accounts. Identity privacy can be achieved in several ways, including client-side proof generation that keeps all user info on the user’s device. If a project offers only this pillar, it can be said to be anonymous, but not private.

Computation privacy means that any activity that happens is not viewable by anyone other than the intended participants. This includes the contract code itself, function execution, contract address, and full callstack privacy. Additionally, any metadata generated by the transaction can be appropriately obfuscated (for example, transaction effects and events are padded, and inclusion block numbers fall within appropriate sets). Callstack privacy covers which contracts you call, which functions in those contracts you’ve called, what the results of those functions were, any subsequent functions that will be called afterward, and what the inputs to the functions were. A project must offer this pillar to do anything privately beyond basic transactions.
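Padding is one concrete way to stop metadata from leaking: if every transaction emits the same number of same-sized event slots, an observer cannot infer activity from the count. The sketch below assumes a hypothetical fixed bound of 8 event slots per transaction and 32-byte encrypted events; the bound, the sizes, and the function name are illustrative assumptions, not Aztec's actual parameters.

```python
import secrets

EVENT_SIZE = 32   # hypothetical: every encrypted event is exactly 32 bytes
MAX_EVENTS = 8    # hypothetical: fixed number of event slots per transaction


def pad_events(encrypted_events: list[bytes], max_events: int = MAX_EVENTS) -> list[bytes]:
    """Return exactly `max_events` slots so the event count leaks nothing.

    Real events are assumed to be ciphertexts that look random, so filler slots
    are random bytes of the same size rather than zeros (zeros would be
    trivially distinguishable from ciphertext).
    """
    if len(encrypted_events) > max_events:
        raise ValueError("too many events for the fixed slot count")
    if any(len(e) != EVENT_SIZE for e in encrypted_events):
        raise ValueError("events must all be EVENT_SIZE bytes")
    filler = [secrets.token_bytes(EVENT_SIZE) for _ in range(max_events - len(encrypted_events))]
    return list(encrypted_events) + filler
```

A transaction that emits one event and one that emits five now produce outputs of identical shape.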

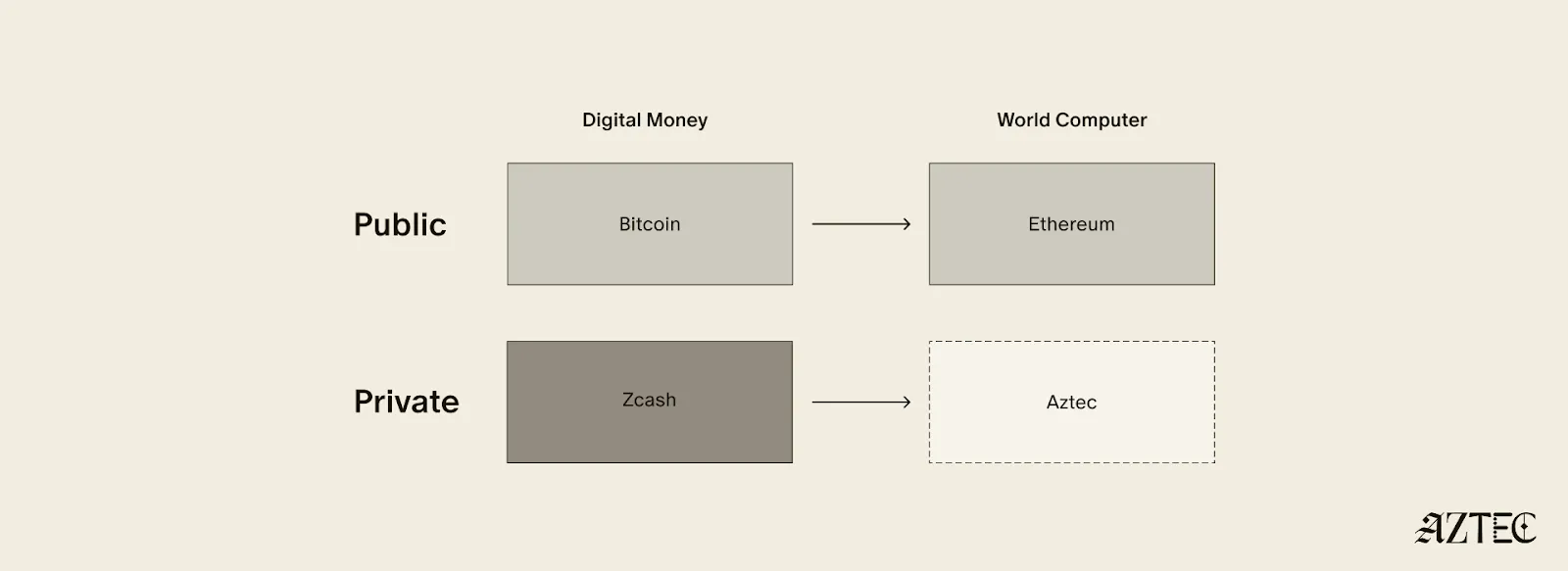

Bitcoin ushered in a new paradigm of digital money. As a permissionless, peer-to-peer currency and store of value, it changed the way value could be sent around the world and who could participate. Ethereum expanded this vision to bring us the world computer, a decentralized, general-purpose blockchain with programmable smart contracts.

Given the limitations of running a transparent blockchain that exposes all user activity, accounts, and assets, it was clear that adding the option to preserve privacy would unlock many benefits (and more closely resemble real cash). But this was a very challenging problem. Zcash was one of the first to extend Bitcoin’s functionality with optional privacy, unlocking a new privacy-preserving UTXO model for transacting privately. As we’ll see below, many of the current privacy-focused projects are working on similar kinds of private digital money for Ethereum or other chains.

Now, Aztec is bringing us the final missing piece: a private world computer.

A private world computer is fully decentralized, programmable, and permissionless like Ethereum and has optional privacy at every level. In other words, Aztec is extending all the functionality of Ethereum with optional transaction, identity, and computational privacy. This is the only approach that enables fully compliant, decentralized applications to be built that preserve user privacy, a new design space that we see as ushering in the next Renaissance for the space.

Private digital money emerges when you have the first two privacy pillars covered - transactions and identity - but you don’t have the third - computation. Almost all projects today that claim some level of privacy are working on private digital money. This includes everything from privacy pools on Ethereum and L2s to newly emerging payment L1s like Tempo and Arc that are developing various degrees of transaction privacy.

When it comes to digital money, privacy exists on a spectrum. If your identity is hidden but your transactions are visible, that's what we call anonymous. If your transactions are hidden but your identity is known, that's confidential. And when both your identity and transactions are protected, that's true privacy. Projects are working on many different approaches to implement this, from PSE to Payy using Noir, the zkDSL built to make it intuitive to build zk applications using familiar Rust-like syntax.

Private digital money is designed to make payments private, but any interaction with more complex smart contracts than a straightforward payment transaction is fully exposed.

What if we also want to build decentralized private apps using smart contracts (usually multiple that talk to each other)? For this, you need all three privacy pillars: transaction, identity, and compute.

If you have these three pillars covered and you have decentralization, you have built a private world computer. Without decentralization, you are vulnerable to censorship, privileged backdoors and inevitable centralized control that can compromise privacy guarantees.
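The framework above can be condensed into a small decision table. This is a sketch of our reading of the article's definitions: the labels for the single-pillar and two-pillar cases and for the full private world computer come from the text, while "transparent" and the fallback labels for combinations the article does not name (for example computation privacy without identity privacy, or all three pillars without decentralization) are our own assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PrivacyProfile:
    """Which privacy pillars a project offers as an option, plus decentralization."""
    transaction: bool
    identity: bool
    computation: bool
    decentralized: bool


# (transaction, identity, computation) -> label used in the article
_LABELS = {
    (False, False, False): "transparent",
    (True, False, False): "confidential",
    (False, True, False): "anonymous",
    (True, True, False): "private digital money",
}


def classify(p: PrivacyProfile) -> str:
    key = (p.transaction, p.identity, p.computation)
    if key == (True, True, True):
        # All three pillars only add up to a private world computer when decentralized.
        return "private world computer" if p.decentralized else "all three pillars, but centralized"
    # Assumption: combinations the article does not name get a generic label.
    return _LABELS.get(key, "partial privacy (not named in the article)")
```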

What exactly is a private world computer? A private world computer extends all the functionality of Ethereum with optional privacy at every level, so developers can easily control which aspects they want public or private and users can selectively disclose information. With Aztec, developers can build apps with optional transaction, identity, and compute privacy on a fully decentralized network. Below, we’ll break down the main components of a private world computer.

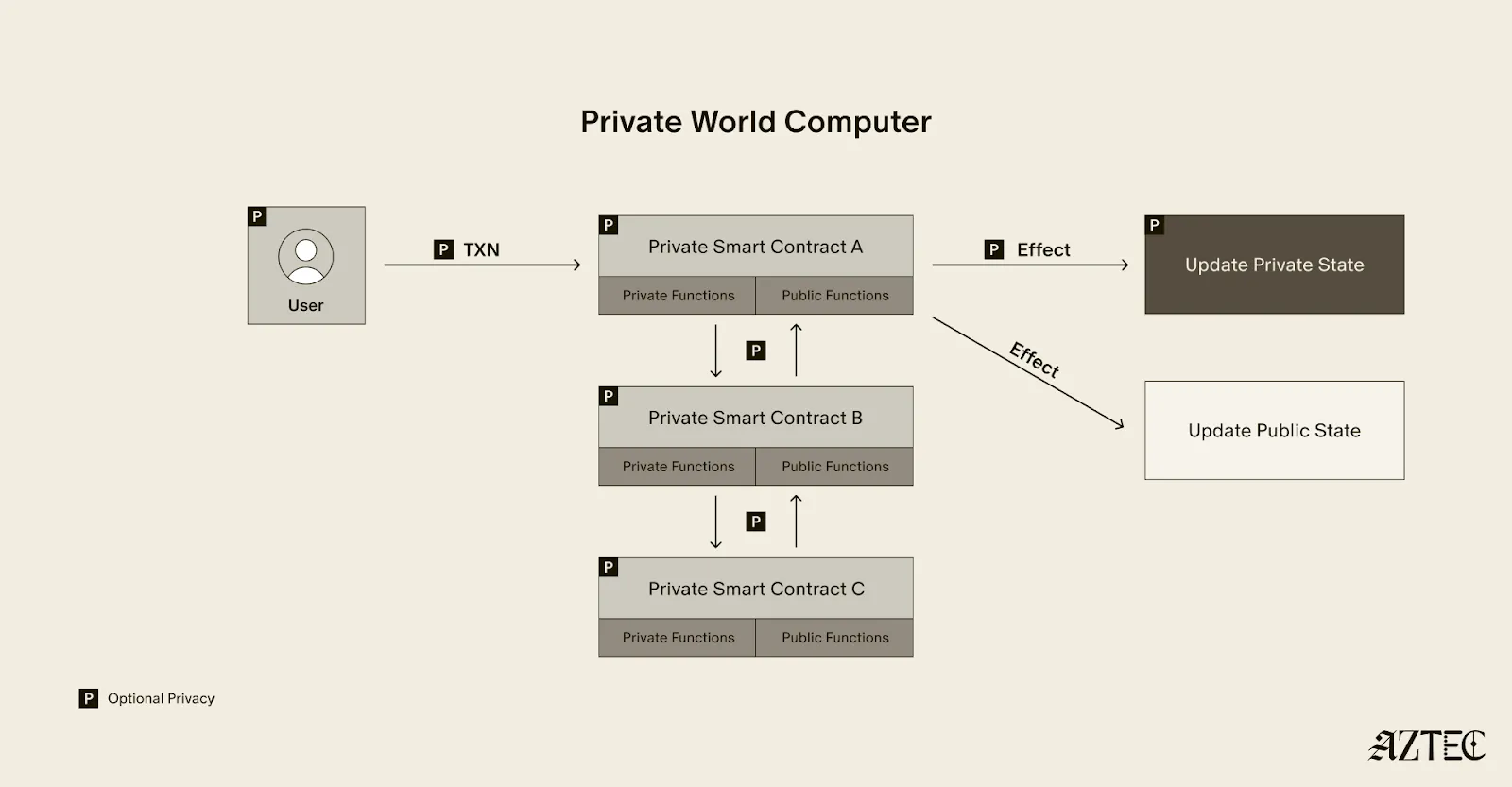

A private world computer is powered by private smart contracts. Private smart contracts have fully optional privacy and also enable seamless public and private function interaction.

Private smart contracts simply extend the functionality of regular smart contracts with added privacy.

As a developer, you can easily designate which functions you want to keep private and which you want to make public. For example, a voting app might allow users to privately cast votes and publicly display the result. Private smart contracts can also interact privately with other smart contracts, without needing to make it public which contracts have interacted.
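The voting example can be sketched as a private function that would run on the user's device and a public function that updates the visible tally. In this toy Python model, which is our illustration and not Aztec's contract API, a per-voter nullifier derived from a secret prevents double voting without revealing who voted. It deliberately omits voter-eligibility checks (a real app would prove membership in an allow-list) and the zero-knowledge proof that makes the private step verifiable; `ToyVotingContract` and its methods are hypothetical names.

```python
import hashlib


def h(*parts: bytes) -> bytes:
    """SHA-256 over length-prefixed parts, so different splits never collide."""
    m = hashlib.sha256()
    for p in parts:
        m.update(len(p).to_bytes(8, "big"))
        m.update(p)
    return m.digest()


class ToyVotingContract:
    def __init__(self, election_id: bytes) -> None:
        self.election_id = election_id
        self.nullifiers: set[bytes] = set()   # public: one entry per vote cast
        self.tally: dict[str, int] = {}        # public: the displayed result

    def cast_vote_private(self, voter_secret: bytes, choice: str) -> None:
        # Private step: in a real app this runs on the voter's device inside a proof,
        # so only the nullifier and the public call below would leave the device.
        nullifier = h(b"vote-nullifier", self.election_id, voter_secret)
        self._add_to_tally_public(nullifier, choice)

    def _add_to_tally_public(self, nullifier: bytes, choice: str) -> None:
        # Public step: anyone can see this state change, but not who caused it.
        if nullifier in self.nullifiers:
            raise ValueError("this voter has already voted")
        self.nullifiers.add(nullifier)
        self.tally[choice] = self.tally.get(choice, 0) + 1
```

The design point is the split: only the nullifier and the chosen option reach public state, so the tally is auditable while the link between a voter's identity and their vote stays with the voter.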

Transaction: Aztec optionally supports fully private inputs, including messages, state, and function calldata. Private state is updated via a private UTXO state tree.

Identity: Using client-side proofs and function execution, Aztec can optionally keep all user info private, including tx.origin and msg.sender for transactions.

Computation: The contract code itself, function execution, and call stack can all be kept private. This includes which contracts you call, what functions in those contracts you’ve called, what the results of those functions were, and what the inputs to the function were.
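Private state in a UTXO model is usually built from two sets: commitments to notes (which record that a note exists without revealing it) and nullifiers (which mark a note as spent without revealing which one). The sketch below is a generic Zcash-style illustration under simplifying assumptions: SHA-256 stands in for the circuit-friendly hashes a real system would use, a plain list stands in for the note hash tree, and the checks a real system proves in zero knowledge are done in the clear. `Note` and `ToyPrivateState` are hypothetical names, not Aztec APIs.

```python
import hashlib
import secrets
from dataclasses import dataclass


def h(*parts: bytes) -> bytes:
    """SHA-256 over length-prefixed parts (a stand-in for a circuit-friendly hash)."""
    m = hashlib.sha256()
    for p in parts:
        m.update(len(p).to_bytes(8, "big"))
        m.update(p)
    return m.digest()


@dataclass(frozen=True)
class Note:
    owner_secret: bytes   # known only to the owner
    value: int
    randomness: bytes     # blinds the commitment so equal values look different

    def commitment(self) -> bytes:
        return h(b"note", self.owner_secret, self.value.to_bytes(16, "big"), self.randomness)

    def nullifier(self) -> bytes:
        # Needs the owner's secret, so observers cannot link a nullifier to its commitment.
        return h(b"nullifier", self.owner_secret, self.commitment())


class ToyPrivateState:
    def __init__(self) -> None:
        self.commitments: list[bytes] = []   # append-only; stands in for the note hash tree
        self.nullifiers: set[bytes] = set()  # spent-note markers

    def insert(self, note: Note) -> None:
        self.commitments.append(note.commitment())

    def spend(self, inputs: list[Note], outputs: list[Note]) -> None:
        # A real system proves these checks in zero knowledge; here they run in the clear.
        spent = [n.nullifier() for n in inputs]
        if len(set(spent)) != len(spent):
            raise ValueError("same note used twice")
        for note, nf in zip(inputs, spent):
            if note.commitment() not in self.commitments:
                raise ValueError("unknown note")
            if nf in self.nullifiers:
                raise ValueError("note already spent")
        if any(n.value < 0 for n in outputs):
            raise ValueError("negative output")
        if sum(n.value for n in inputs) != sum(n.value for n in outputs):
            raise ValueError("value not conserved")
        self.nullifiers.update(spent)
        for note in outputs:
            self.insert(note)
```

A spend publishes only nullifiers and new commitments, so observers learn neither which note was consumed nor who received the outputs.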

A decentralized network must be made up of a permissionless network of operators who run the network and decide on upgrades. Aztec is run by a decentralized network of node operators who propose and attest to transactions. Rollup proofs on Aztec are generated by a decentralized prover network whose members can permissionlessly submit proofs and participate in block rewards. Finally, the Aztec network is governed by the sequencers, who propose, signal, vote, and execute network upgrades.

A private world computer enables the creation of DeFi applications where accounts, transactions, order books, and swaps remain private. Users can protect their trading strategies and positions from public view, preventing front-running and maintaining competitive advantages. Additionally, users can bridge privately into cross-chain DeFi applications, allowing them to participate in DeFi across multiple blockchains while keeping their identity private, even when those applications live on existing transparent blockchains.

This technology makes it possible to bring institutional trading activity on-chain while maintaining the privacy that traditional finance requires. Institutions can privately trade with other institutions globally, without having to touch public markets, enjoying the benefits of blockchain technology such as fast settlement and reduced counterparty risk, without exposing their trading intentions or volumes to the broader market.

Organizations can bring client accounts and assets on-chain while maintaining full compliance. This infrastructure protects on-chain asset trading and settlement strategies, ensuring that sophisticated financial operations remain private. A private world computer also supports private stablecoin issuance and redemption, allowing financial institutions to manage digital currency operations without revealing sensitive business information.

Users have granular control over their privacy settings, allowing them to fine-tune privacy levels for their on-chain identity according to their specific needs. The system enables selective disclosure of on-chain activity, meaning users can choose to reveal certain transactions or holdings to regulators, auditors, or business partners while keeping other information private, meeting compliance requirements.
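Selective disclosure can be illustrated with a Merkle inclusion proof, a generic technique and not necessarily the mechanism Aztec uses. A user commits publicly to only the root of a tree over their records, then hands an auditor one record plus the sibling hashes on its path; the auditor can check that the record belongs to the committed set without seeing the others. The function names are hypothetical, and a production version would salt each leaf so that low-entropy records cannot be guessed from sibling hashes.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_tree(records: list[bytes]) -> list[list[bytes]]:
    """Return every level of the tree, leaves first; the root is levels[-1][0]."""
    if not records:
        raise ValueError("need at least one record")
    level = [_h(b"leaf" + r) for r in records]   # domain-separate leaves from nodes
    levels = [level]
    while len(level) > 1:
        if len(level) % 2:                        # odd count: pair the last node with itself
            level = level + [level[-1]]
        level = [_h(b"node" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def prove(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the bool says whether we are the left child."""
    path = []
    for level in levels[:-1]:
        sibling = index ^ 1
        path.append((level[sibling] if sibling < len(level) else level[index], index % 2 == 0))
        index //= 2
    return path


def verify(root: bytes, record: bytes, path: list[tuple[bytes, bool]]) -> bool:
    node = _h(b"leaf" + record)
    for sibling, is_left in path:
        node = _h(b"node" + node + sibling) if is_left else _h(b"node" + sibling + node)
    return node == root
```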

The shift from transparent blockchains to privacy-preserving infrastructure is the foundation for bringing the next billion users on-chain. Whether you're a developer building the future of private DeFi, an institution exploring compliant on-chain solutions, or simply someone who believes privacy is a fundamental right, now is the time to get involved.

Follow Aztec on X to stay updated on the latest developments in private smart contracts and decentralized privacy technology. Ready to contribute to the network? Run a node and help power the private world computer.

The next Renaissance is here, and it’s being powered by the private world computer.

Aztec’s Public Testnet launched in May 2025.

Since then, we’ve been obsessively working toward our ultimate goal: launching the first fully decentralized privacy-preserving layer-2 (L2) network on Ethereum. This effort has involved a team of over 70 people, including world-renowned cryptographers and builders, with extensive collaboration from the Aztec community.

To make something private is one thing, but to also make it decentralized is another. Privacy is only half of the story. Every component of the Aztec Network will be decentralized from day one because decentralization is the foundation that allows privacy to be enforced by code, not by trust. This includes sequencers, which order and validate transactions, provers, which create privacy-preserving cryptographic proofs, and settlement on Ethereum, which finalizes transactions on the secure Ethereum mainnet to ensure trust and immutability.

Strong progress is being made by the community toward full decentralization. The Aztec Network now includes nearly 1,000 sequencers in its validator set, with 15,000 nodes spread across more than 50 countries on six continents. With this globally distributed network in place, the Aztec Network is ready for users to stress test and challenge its resilience.

We're now entering a new phase: the Adversarial Testnet. This stage will test the resilience of the Aztec Testnet and its decentralization mechanisms.

The Adversarial Testnet introduces two key features: slashing, which penalizes validators for malicious or negligent behavior in Proof-of-Stake (PoS) networks, and a fully decentralized governance mechanism for protocol upgrades.

This phase will also simulate network attacks to test its ability to recover independently, ensuring it could continue to operate even if the core team and servers disappeared (see more on Vitalik’s “walkaway test” here). It also opens the validator set to more people using ZKPassport, a private identity verification app, to verify their identity online.

The Aztec Network testnet is decentralized, run by a permissionless network of sequencers.

The slashing upgrade tests one of the most fundamental mechanisms for removing inactive or malicious sequencers from the validator set, an essential step toward strengthening decentralization.

Similar to Ethereum, on the Aztec Network, any inactive or malicious sequencers will be slashed and removed from the validator set. Sequencers will be able to slash any validator that makes no attestations for an entire epoch or proposes an invalid block.

Three slashes will result in being removed from the validator set. Sequencers may rejoin the validator set at any time after getting slashed; they just need to rejoin the queue.
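The slashing rules can be sketched as a small state machine. The two offences (no attestations during an entire epoch, proposing an invalid block), the three-slash limit, and rejoining via the queue come from the text. Everything else is a simplifying assumption: offences are applied automatically rather than by sequencers choosing to slash, and a sequencer's slash count resets when it re-enters from the queue, a detail the text does not specify. `ToyValidatorSet` is a hypothetical name.

```python
from collections import deque
from typing import Optional

MAX_SLASHES = 3  # from the text: three slashes remove a sequencer


class ToyValidatorSet:
    def __init__(self) -> None:
        self.slashes: dict[str, int] = {}   # active validator -> slashes so far
        self.queue: deque = deque()         # entry queue for joining or rejoining

    def request_join(self, validator: str) -> None:
        if validator not in self.slashes and validator not in self.queue:
            self.queue.append(validator)

    def admit_next(self) -> Optional[str]:
        if not self.queue:
            return None
        validator = self.queue.popleft()
        self.slashes[validator] = 0         # assumption: count resets on re-entry
        return validator

    def end_of_epoch(self, validator: str, attestations: int, proposed_invalid_block: bool) -> bool:
        """Apply the two offences from the text; return True if the validator is removed."""
        if validator not in self.slashes:
            return False
        if attestations == 0 or proposed_invalid_block:
            self.slashes[validator] += 1
            if self.slashes[validator] >= MAX_SLASHES:
                del self.slashes[validator]
                return True
        return False
```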

In addition to testing network resilience when validators go offline and evaluating the slashing mechanisms, the Adversarial Testnet will also assess the robustness of the network’s decentralized governance during protocol upgrades.

Adversarial Testnet introduces changes to Aztec Network’s governance system.

Sequencers now have an even more central role, as they are the sole actors permitted to deposit assets into the Governance contract.

After the upgrade is defined and the proposed contracts are deployed, sequencers will vote on and implement the upgrade independently, without any involvement from Aztec Labs and/or the Aztec Foundation.

Starting today, you can join the Adversarial Testnet to help battle-test Aztec’s decentralization and security. Anyone can compete in six categories for a chance to win exclusive Aztec swag, be featured on the Aztec X account, and earn a DappNode. The six challenge categories include:

Performance will be tracked using Dashtec, a community-built dashboard that pulls data from publicly available sources. Dashtec displays a weighted score of your validator performance, which may be used to evaluate challenges and award prizes.

The dashboard offers detailed insights into sequencer performance through a stunning UI, allowing users to see exactly who is in the current validator set and providing a block-by-block view of every action taken by sequencers.

To join the validator set and start tracking your performance, click here. Join us on Thursday, July 31, 2025, at 4 pm CET on Discord for a Town Hall to hear more about the challenges and prizes. Who knows, we might even drop some alpha.

To stay up-to-date on all things Noir and Aztec, make sure you’re following along on X.

Aztec will be a fully decentralized, permissionless and privacy-preserving L2 on Ethereum. The purpose of Aztec’s Public Testnet is to test all the decentralization mechanisms needed to launch a strong and decentralized mainnet. In this post, we’ll explore what full decentralization means, how the Aztec Foundation is testing each aspect in the Public Testnet, and the challenges and limitations of testing a decentralized network in a testnet environment.

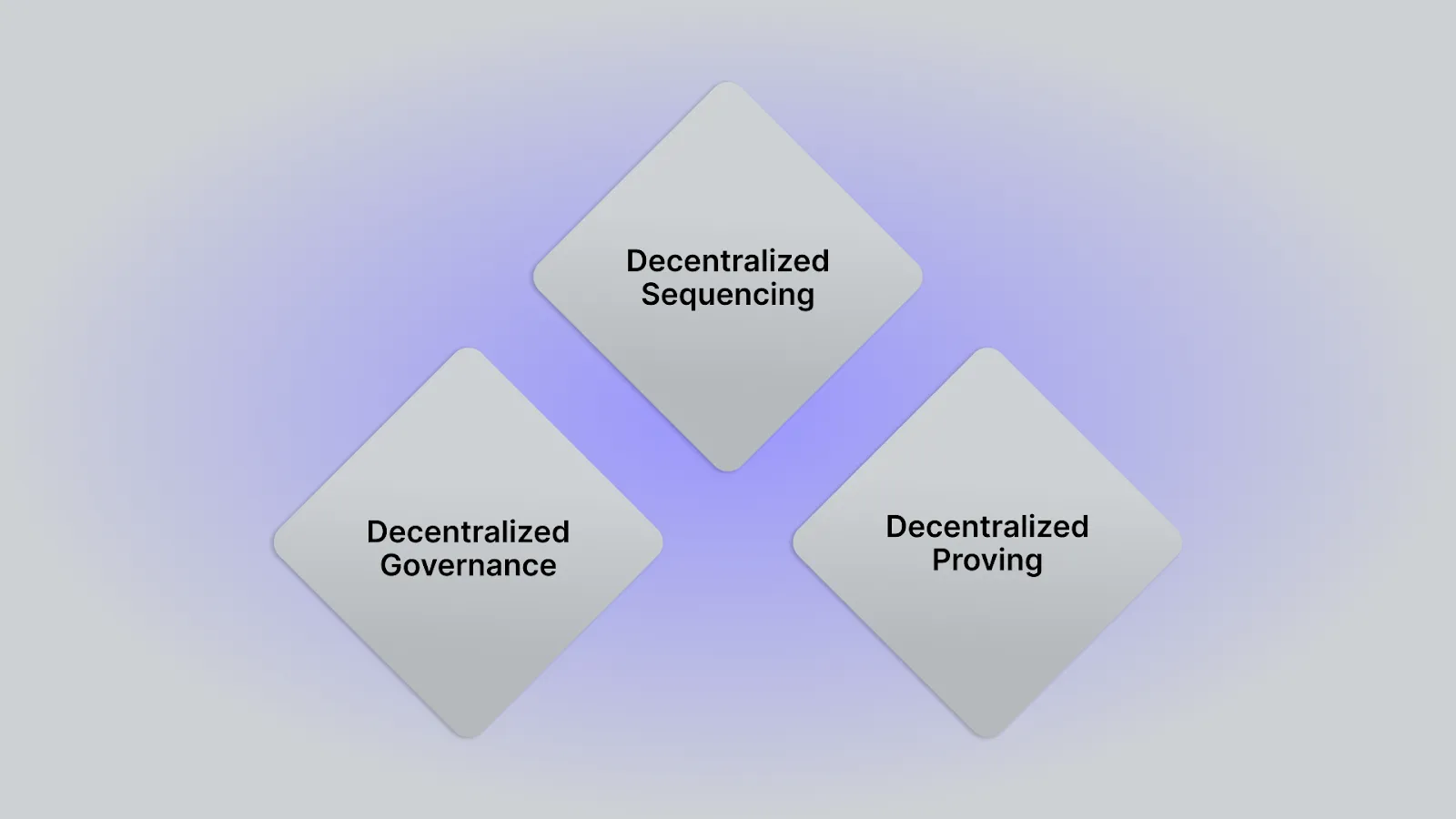

Three requirements must be met to achieve decentralization for any zero-knowledge L2 network:

Decentralization across sequencing, proving, and governance is essential to ensure that no single party can control or censor the network. Decentralized sequencing guarantees open participation in block production, while decentralized proving ensures that block validation remains trustless and resilient, and finally, decentralized governance empowers the community to guide network evolution without centralized control.

Together, these pillars secure the rollup’s autonomy and long-term trustworthiness. Let’s explore how Aztec’s Public Testnet is testing the implementation of each of these aspects.

Aztec will launch with a fully decentralized sequencer network.

This means that anyone can run a sequencer node and start sequencing transactions, proposing blocks to L1, and validating blocks built by other sequencers. The sequencer network is a proof-of-stake (PoS) network like Ethereum, but it differs in an important way. Rather than broadcasting each block to every sequencer for validation, Aztec has each block validated by a randomly chosen committee of 48 sequencers, and two-thirds of that committee must verify a block before it is added to the L2 chain. This gives users fast preconfirmations: the Aztec Network can sequence transactions quickly while relying on Ethereum for final settlement security.
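The committee rule can be sketched in a few lines. The committee size of 48 and the two-thirds threshold come from the text; rounding the threshold up (32 of 48) and using Python's seeded `random.Random` as a stand-in for the protocol's actual source of randomness are our assumptions, and the function names are hypothetical.

```python
import random

COMMITTEE_SIZE = 48  # from the text


def sample_committee(validators: list[str], seed: int, size: int = COMMITTEE_SIZE) -> list[str]:
    """Pick `size` distinct validators; a seeded PRNG stands in for protocol randomness."""
    if len(validators) < size:
        raise ValueError("not enough validators for a full committee")
    rng = random.Random(seed)
    return rng.sample(sorted(validators), size)   # sort so the result depends only on the seed


def required_attestations(committee_size: int) -> int:
    """Two-thirds of the committee, rounded up (an assumption): 32 of 48."""
    return (2 * committee_size + 2) // 3


def block_accepted(committee: list[str], attesters: list[str]) -> bool:
    """Count only distinct attesters who are actually on this block's committee."""
    valid = set(attesters) & set(committee)
    return len(valid) >= required_attestations(len(committee))
```

Because only 48 sequencers need to respond, a block can be preconfirmed without waiting on the whole validator set.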

PoS is fundamentally an anti-sybil mechanism—it works by giving economic weight to participation and slashing malicious actors. At the time of Aztec’s mainnet, this will allow sequencers to vote out bad actors and burn their staked assets. On the Public Testnet, where there are no real economic incentives, PoS doesn't function properly. To address this, we introduced a queue system that limits how quickly new sequencers can join, helping to maintain network health and giving the network time to react to potential malicious behavior.

Behind the scenes, a contract handles sequencer onboarding—it mints staking assets, adds sequencers to the set, and can remove them if necessary. This contract is just for Public Testnet and will be removed on Mainnet, allowing us to simulate and test the decentralized sequencing mechanisms safely.

Aztec will also launch with a fully decentralized prover network.

Provers generate cryptographic proofs that verify the correctness of public transactions, culminating in a single rollup proof submitted to Ethereum. Decentralized proving reduces centralization risk and liveness failures, but also opens up a marketplace to incentivize fast and efficient proof generation. The proving client developed by Aztec Labs involves three components:

Once the final proof has been computed, the proving node sends the proof to L1 for verification. The Aztec Network splits proving rewards among everyone who submits a proof on time, reducing the risk that a single entity with large compute dominates the network.
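A sketch of the reward rule described above, assuming an equal split and a submission deadline measured in some abstract time unit (for example an L1 block number). The text only says rewards are split among everyone who submits on time; equal shares, integer units, and leaving the division remainder unallocated are our own illustrative choices, and the function name is hypothetical.

```python
def split_proving_rewards(epoch_reward: int, submissions: dict[str, int], deadline: int) -> dict[str, int]:
    """Equal integer shares for every prover whose submission time is <= deadline.

    `submissions` maps prover -> submission time (e.g. an L1 block number).
    Any remainder from integer division is left unallocated in this sketch.
    """
    on_time = sorted(p for p, t in submissions.items() if t <= deadline)
    if not on_time:
        return {}
    share = epoch_reward // len(on_time)
    return {prover: share for prover in on_time}
```

With three on-time provers, a 10-unit reward pays 3 units each and leaves 1 unallocated; a late submission earns nothing regardless of how much compute stood behind it.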

For Aztec’s Public Testnet, anyone can spin up a prover node and start generating proofs. Running a prover node is more hardware-intensive than running a sequencer node, requiring ~40 machines with an estimated 16 cores and 128GB RAM each. Because running provers can be cost-intensive, and costs on a testnet are the same as they will be on mainnet, Aztec’s Public Testnet will throttle throughput to 0.2 transactions per second (TPS).
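For a sense of scale, the throttle and the hardware estimate work out as follows (simple arithmetic on the figures above, treating the core and RAM estimates as per machine, as stated):

$$
0.2\ \tfrac{\text{tx}}{\text{s}} \times 86{,}400\ \tfrac{\text{s}}{\text{day}} = 17{,}280\ \tfrac{\text{tx}}{\text{day}}
$$

$$
40 \times 16\ \text{cores} = 640\ \text{cores}, \qquad 40 \times 128\ \text{GB} = 5{,}120\ \text{GB of RAM}
$$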

Keeping transaction volumes low allows us to test a fully decentralized prover network without overwhelming participating provers with high costs before real network incentives are in place.

Finally, Aztec will launch with fully decentralized governance.

In order for network upgrades to occur, anyone can put forward a proposal for sequencers to consider. If a majority of sequencers signal their support, the proposal gets sent to a vote. Once it passes the vote, anyone can execute the script that will implement the upgrade. Note: For this testnet, the second phase of voting will be skipped.
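The upgrade flow can be sketched as a small state machine. The stages (signal, vote, execute), the majority signalling threshold, the fact that anyone can execute, and the testnet's skipped second phase come from the text; the voting rule (more "yes" than "no" among votes cast) and the class and method names are our own hypothetical choices.

```python
from enum import Enum, auto
from typing import Optional


class Stage(Enum):
    SIGNALLING = auto()
    VOTING = auto()
    PASSED = auto()
    REJECTED = auto()
    EXECUTED = auto()


class ToyProposal:
    """Hypothetical model of the signal -> vote -> execute flow described above."""

    def __init__(self, sequencers: list[str], skip_vote: bool = False) -> None:
        self.sequencers = frozenset(sequencers)
        self.skip_vote = skip_vote          # testnet: second voting phase skipped
        self.signals: set[str] = set()
        self.votes: dict[str, bool] = {}
        self.stage = Stage.SIGNALLING

    def _check(self, stage: Stage, sequencer: Optional[str] = None) -> None:
        if self.stage is not stage:
            raise ValueError(f"proposal is in {self.stage.name}, not {stage.name}")
        if sequencer is not None and sequencer not in self.sequencers:
            raise ValueError("only sequencers may signal or vote")

    def signal(self, sequencer: str) -> None:
        self._check(Stage.SIGNALLING, sequencer)
        self.signals.add(sequencer)
        # A strict majority of sequencers signalling moves the proposal on.
        if 2 * len(self.signals) > len(self.sequencers):
            self.stage = Stage.PASSED if self.skip_vote else Stage.VOTING

    def vote(self, sequencer: str, support: bool) -> None:
        self._check(Stage.VOTING, sequencer)
        self.votes[sequencer] = support     # a later vote overwrites an earlier one

    def close_vote(self) -> None:
        self._check(Stage.VOTING)
        yes = sum(self.votes.values())
        no = len(self.votes) - yes
        self.stage = Stage.PASSED if yes > no else Stage.REJECTED

    def execute(self) -> None:
        # Anyone may execute a passed proposal, so there is no sequencer check here.
        self._check(Stage.PASSED)
        self.stage = Stage.EXECUTED
```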

Decentralized governance is an important step in enabling anyone to participate in shaping the future of the network. The goal of the public testnet is to ensure the mechanisms are functioning properly for sequencers to permissionlessly join and control the Aztec Network from day 1.

One additional aspect to consider with regard to full decentralization is the role of network users in decentralizing the compute load of the network.

Aztec Labs has developed groundbreaking technology to make end-to-end programmable privacy possible, first with the release of PLONK and later with refinements like MegaHonk that make it feasible to generate client-side ZKPs. Client-side proofs keep sensitive data on the user’s device while still enabling users to interact with and store this information privately onchain. They also help to scale throughput by pushing execution to users. This decentralizes the compute requirements and means users can execute arbitrary logic in their private functions.

Sequencers and provers on the Aztec Network are never able to see any information that users or applications want to keep private, including accounts, activity, balances, function execution, or other data of any kind.

Aztec’s Public Testnet is shipping with a full execution environment, including the ability to create client-side proofs in the browser. Here are some time estimations to expect for generating private, client-side proofs:

Aztec’s Public Testnet is designed to rigorously test decentralization across sequencing, proving, and governance ahead of our mainnet launch. The network design ensures no single entity can control or censor activity, empowering anyone to participate in sequencing transactions, generating proofs, and proposing governance changes.

Visit the Aztec Testnet page to start building with programmable privacy and join our community on Discord.

When Aztec mainnet launches, it will be the first fully private and decentralized L2 on Ethereum. Getting here was a long road. When Aztec started eight years ago, the initial plan was to build an onchain financial service called CreditMint for issuing corporate debt to mid-market enterprises, obviously a distant use case from how we understand Aztec today. When co-founders Zac Williamson, Joe Andrews, Tom Pocock, and Arnaud Schenk got started, zero-knowledge proving systems and applications weren’t even in their infancy: there was no PLONK, no Noir, no programmable privacy, and it wasn’t clear that demand for onchain privacy was even strong enough to necessitate a new blockchain network. The founders’ initial explorations through CreditMint led to what we know as Aztec today.

While putting corporate debt onchain might seem unglamorous (or just limited compared with how we now understand Aztec’s capabilities), it was useful and wildly popular, and it was what led the founding team to realize that no serious institution wanted to touch the blockchain without the same privacy assurances it was accustomed to in the corporate world. Traditional finance is built around trusted intermediaries and middlemen, which of course introduces friction and bottlenecks progress, but it offers more privacy assurances than public blockchains like Ethereum.

This takeaway led to a bigger understanding: the number of people (not just the number of institutions) who wanted to use the blockchain was limited by a lack of programmable privacy. Aztec was born out of the recognition that everyone – not only corporations – could use permissionless, onchain systems for private transactions, and this could become the default for all online payments. In the words of the CEO, Zac Williamson:

“If you had programmable digital money that had privacy guarantees around it, you could use that to create extremely fast permissionless payment channels for payments on the internet.”

Equipped with this understanding, Zac and Joe began to specialize. Zac, whose background is in particle physics, went deep on cryptography research and began exploring protocols that could be used to enable onchain privacy. Meanwhile, Joe worked on how to get user adoption for privacy tech, while Arnaud focused on getting the initial CreditMint platform live and recruiting early members of the team. In 2018, Aztec published a proof-of-concept transaction demonstrating the creation and transfer of private assets on Ethereum – using an early cryptographic protocol that predated modern proving schemes like PLONK. It was a limited example, with just DAI as the test-case (and it could only facilitate private assets, not private identities), but it garnered a lot of early interest from members of the Ethereum community.

The 2018 version of the Aztec Protocol had three key limitations: it wasn’t programmable, it only supported private data (rather than private data and user-level privacy), and it was expensive, from both a computation and gas perspective. The underlying proving scheme was, in the words of Zac, a “Frankenstein cryptography protocol using older primitives than zk-SNARKs.” These limitations motivated the development of PLONK in 2019, a SNARK-based proving system that is computationally inexpensive, and only requires one universal trusted setup.

A single universal trusted setup is desirable because it allows developers to utilize a common reference string for all of the programs they might want to instantiate in a circuit; the alternative is a much more cumbersome process of conducting a trusted setup ceremony for each cryptographic circuit. In other words, PLONK enabled programmable privacy for future versions of Aztec.
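The value of a universal setup can be shown with a toy version of the Kate (KZG) polynomial commitment that PLONK was built on. One ceremony produces powers of a secret τ "in the exponent"; any polynomial up to the chosen degree bound, and therefore any circuit up to that size, can be committed with the same reference string. This sketch is deliberately insecure and simplified: it uses a tiny multiplicative group modulo the safe prime 2039 instead of a pairing-friendly elliptic curve, and it omits opening proofs and pairing checks entirely. The parameters and function names are our own.

```python
import secrets

# Toy group: the order-Q subgroup of Z_P^*, with P = 2Q + 1 (both prime).
P, Q = 2039, 1019
G = 4  # a quadratic residue other than 1, so it generates the order-Q subgroup


def universal_setup(max_degree: int) -> list[int]:
    """One ceremony: publish G^(tau^i) for i = 0..max_degree, then discard tau."""
    tau = secrets.randbelow(Q - 1) + 1      # the secret "toxic waste"
    srs = [pow(G, pow(tau, i, Q), P) for i in range(max_degree + 1)]
    del tau                                 # a real ceremony must ensure nobody keeps tau
    return srs


def commit(srs: list[int], coeffs: list[int]) -> int:
    """Commit to f(X) = sum(coeffs[i] * X^i) as G^f(tau), without knowing tau."""
    if len(coeffs) > len(srs):
        raise ValueError("polynomial degree exceeds the setup's bound")
    c = 1
    for g_i, a_i in zip(srs, coeffs):
        c = c * pow(g_i, a_i % Q, P) % P
    return c
```

Two unrelated "circuits" (here, two polynomials) reuse the same `srs` without a new ceremony; only exceeding the degree bound would require a larger setup.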

PLONK was a big breakthrough, not just for Aztec, but for the wider blockchain community. Today, PLONK has been implemented and extended by teams like zkSync, Polygon, Mina, and more. There is even an entire category of proving systems called PLONKish that all derive from the original 2019 paper. For Aztec specifically, PLONK was also instrumental in paving the way for zk.money and Aztec Connect, a private payment network and private DeFi rollup, which launched in 2021 and 2022 respectively.

The product needs of Aztec motivated the development of a modern-day proving system. PLONK proofs are computationally cheap to generate, leading not only to lower transaction costs and programmability for developers, but big steps forward for privacy and decentralization. PLONK made it simpler to generate client-side proofs on inexpensive hardware. In the words of Joe, “PLONK [was] developed to keep the middleman away.”

Between 2021 and 2023, the Aztec team operated zk.money and Aztec Connect. The products were not only vital in illustrating that there was a demand for onchain privacy solutions, but in demonstrating that it was possible to build performant and private networks leveraging PLONK. Joe remarked that they “wanted to test that we could build a viable payments network, where the user experience was on par with a public transaction. Privacy needed to be in the background.”

Aztec’s early products indicated that there was significant demand for private onchain payments and DeFi – at peak, the rollups had over $20 million in TVL. Both products fit into the vision Zac had to “make the blockchain real.” In his team’s eyes, blockchains are held back from mainstream adoption because you can’t bring consequential, real-world assets onchain without privacy.

Despite the demand for these networks, the team decided to sunset both zk.money and Aztec Connect after recognizing that they could not fully decentralize them without massive architectural changes. Zac and Joe don’t believe in “Progressive Decentralization”: the network needs to have no centralized operators from day one. And it wasn’t just the sequencer of these early Aztec products that was centralized; the team also recognized that it would have been impossible for other developers to write programs on Aztec that could compose with each other, because all programs operated on shared state. In 2023, zk.money and Aztec Connect were officially shut down.

In tandem, the team also began developing Noir (an original brainchild of Kevaundray Wedderburn). Noir is a Rust-like programming language for writing zero-knowledge circuits that makes privacy technology accessible to mainstream developers. While Noir began as a way to make it easier for developers to write private programs without needing to know cryptography, the team soon realized that the demand for privacy didn’t just apply to applications on the Aztec stack, and that Noir could be a general-purpose DSL for any kind of application that needs to leverage privacy. In the same way that bringing consequential assets and activity onchain “makes the blockchain real,” bringing zero-knowledge technology to any application – onchain or offchain – makes privacy real. The team continued working on Noir, and it has developed into its own product stack today.

Aztec from 2017 to 2024 can be seen as a methodical journey toward building a fully private, programmable, and decentralized blockchain network. The earliest attempt at Aztec as a protocol introduced asset-level privacy, without addressing user-level privacy, or significant programmability. PLONK paved the way for user-level privacy and programmability, which yielded zk.money and Aztec Connect. Noir extended programmability even further, making it easy for developers to build applications in zero-knowledge. But zk.money and Aztec Connect were incomplete without a viable path to decentralization. So, the team decided to build a new network from scratch. Extending on their learnings from past networks, the foundations and findings from continuous R&D efforts of PLONK, and the growing developer community around Noir, they set the stage for Aztec mainnet.

The fact of the matter is that creating a network that is fully private and decentralized is hard. To have privacy, all data must be shielded cheaply inside of a SNARK. If you want to really embrace the idea of “making the blockchain real,” then you should also be able to leverage outside authentication and identity solutions, like Apple ID, and you need to be able to put those technologies inside of a SNARK as well. The number of statements that need to be represented as provable circuits is massive. Then, all of these capabilities need to run inside of a network that is decentralized. The combination of mathematical, technological, and networking problems makes this very difficult to achieve.

The technical architecture of Aztec reflects the learnings of the Aztec team. Zac describes Aztec mainnet as a “Russian nesting doll” of products that all add up to a private and decentralized network. Aztec today consists of:

At the network level, there will be many participants in the decentralization efforts of Aztec: provers, sequencers, and node operators. Joe views the infrastructure-level decentralization as a crucial first stage of Aztec’s mainnet launch.

As Aztec goes live, the vision extends beyond private transactions to enabling entirely new categories of applications. The team envisions use cases ranging from consumer lending based on private credit scores to games leveraging information asymmetry, to social applications that preserve user privacy. The next phase will focus on building a robust ecosystem of developers and the next generation of applications on Ethereum using Noir, the universal language of privacy.

Aztec mainnet marks the emergence of applications that weren't possible before – applications that combine the transparency and programmability of blockchain with the privacy necessary for real-world adoption.

Many thanks to Remi Gai, Hannes Huitula, Giacomo Corrias, Avishay Yanai, Santiago Palladino, ais, ji xueqian, Brecht Devos, Maciej Kalka, Chris Bender, Alex, Lukas Helminger, Dominik Schmid, 0xCrayon, Zac Williamson for inputs, discussions, and reviews.


Prerequisites:

Buzzwords are dangerous. They amuse and fascinate as cutting-edge, innovative, mesmerizing markers of new ideas and emerging mindsets. Even better if they are abbreviations, insider shorthand we can use to make ourselves look smarter and more progressive:

Using buzzwords can obscure the real scope and technical capabilities of a technology. Furthermore, buzzwords can act as gatekeepers, making simple things look complex or, conversely, making complex things look simple (the Dunning-Kruger effect at work).

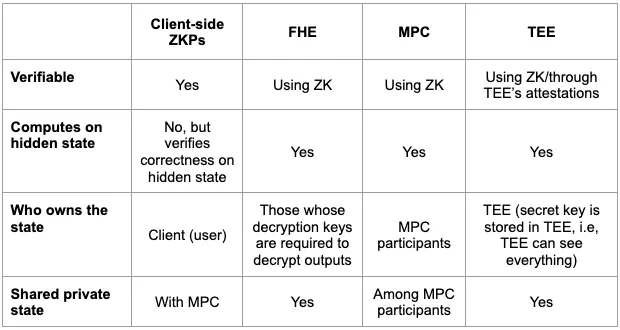

In this article, we briefly review several privacy-related abbreviations, along with their strengths and constraints. After that, we consider whether anyone would benefit from combining them, looking at different configurations and combinations.

Disclaimer: It’s not fair to compare the technologies we’re discussing since it won’t be an apples-to-apples comparison. The goal is to briefly describe each of them, highlighting their strong and weak points. Understanding this, we will be able to make some suggestions about combining these technologies in a meaningful way.

POV: a new dev enters the space.

Client-side ZKP is a specific category of zero-knowledge proofs (a field dating back to the 1980s). Exploring general ZKPs in depth is out of scope for this piece; if you're curious to learn about them, check this article.

Essentially, a zero-knowledge protocol allows one party (the prover) to prove to another party (the verifier) that a given statement is true without conveying any information beyond the mere fact of that statement's truth.

Client-side ZKPs are generated on the user's own device for the sake of privacy. The user performs some arbitrary computation and generates a proof that it was computed correctly. This proof can then be verified and used by external parties.
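To make the prove-then-verify flow concrete, here is a minimal sketch of one classic zero-knowledge proof: a Schnorr proof of knowledge of a discrete logarithm, made non-interactive with the Fiat-Shamir heuristic. The user proves they know a secret `x` behind a public value `y` without revealing `x`. The tiny group parameters `P`, `Q`, `G` and the function names are illustrative assumptions, not what Aztec or any production system uses; real client-side provers handle arbitrary circuits, not just this single statement.

```python
import hashlib
import secrets
from typing import Tuple

# Toy group (insecure, illustration only): P = 2*Q + 1 with Q prime,
# and G = 4 generates the subgroup of order Q.
P, Q, G = 2039, 1019, 4

def challenge(*values: int) -> int:
    """Fiat-Shamir: derive the verifier's challenge from a hash of the transcript."""
    data = b"|".join(str(v).encode() for v in values)
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q

def prove(x: int) -> Tuple[int, int, int]:
    """Runs on the user's device: proves knowledge of x such that y = G^x mod P."""
    y = pow(G, x, P)
    k = secrets.randbelow(Q - 1) + 1   # one-time random nonce, masks x
    t = pow(G, k, P)                   # commitment
    c = challenge(G, y, t)             # challenge
    s = (k + c * x) % Q                # response
    return y, t, s

def verify(y: int, t: int, s: int) -> bool:
    """Runs anywhere (e.g. a sequencer): checks G^s == t * y^c without learning x."""
    c = challenge(G, y, t)
    return pow(G, s, P) == (t * pow(y, c, P)) % P
```

A verifier sees only `y`, `t`, and `s`; because the random nonce `k` is added to `c * x`, the response reveals nothing useful about `x` on its own.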

One of the best-known use cases of client-side ZKPs is a privacy-preserving L2 on Ethereum where, thanks to client-side data processing, some functions of a smart contract (and the values they touch) can be executed privately while the rest are executed publicly. In this case, the client-side proof is generated by the user executing the transaction and then verified by the network sequencer.

However, client-side proof generation is not limited to Ethereum L2s, nor to blockchain at all. Whenever there are two or more parties who want to compute something privately and then verify each other’s computation and utilize their results for some public protocols, client-side ZKPs will be a good fit.

Check this article for more details on how client-side ZKPs work.

The main concern today about on-chain privacy by means of client-side proof generation is the lack of a private shared state. Potentially, it can be mitigated with an MPC committee (which we will cover in later sections).

Speaking of limitations of client-side proving, one should consider:

What can we do with client-side ZKPs today:

Whom to follow for client-side ZKPs updates: Aztec Labs, Miden, Aleo.

Disclaimer: in this section, we discuss general-purpose MPC (i.e. allowing computations on arbitrary functions). There are also a bunch of specialized MPC protocols optimized for various use cases (i.e. designing customized functions) but those are out-of-scope for this article.

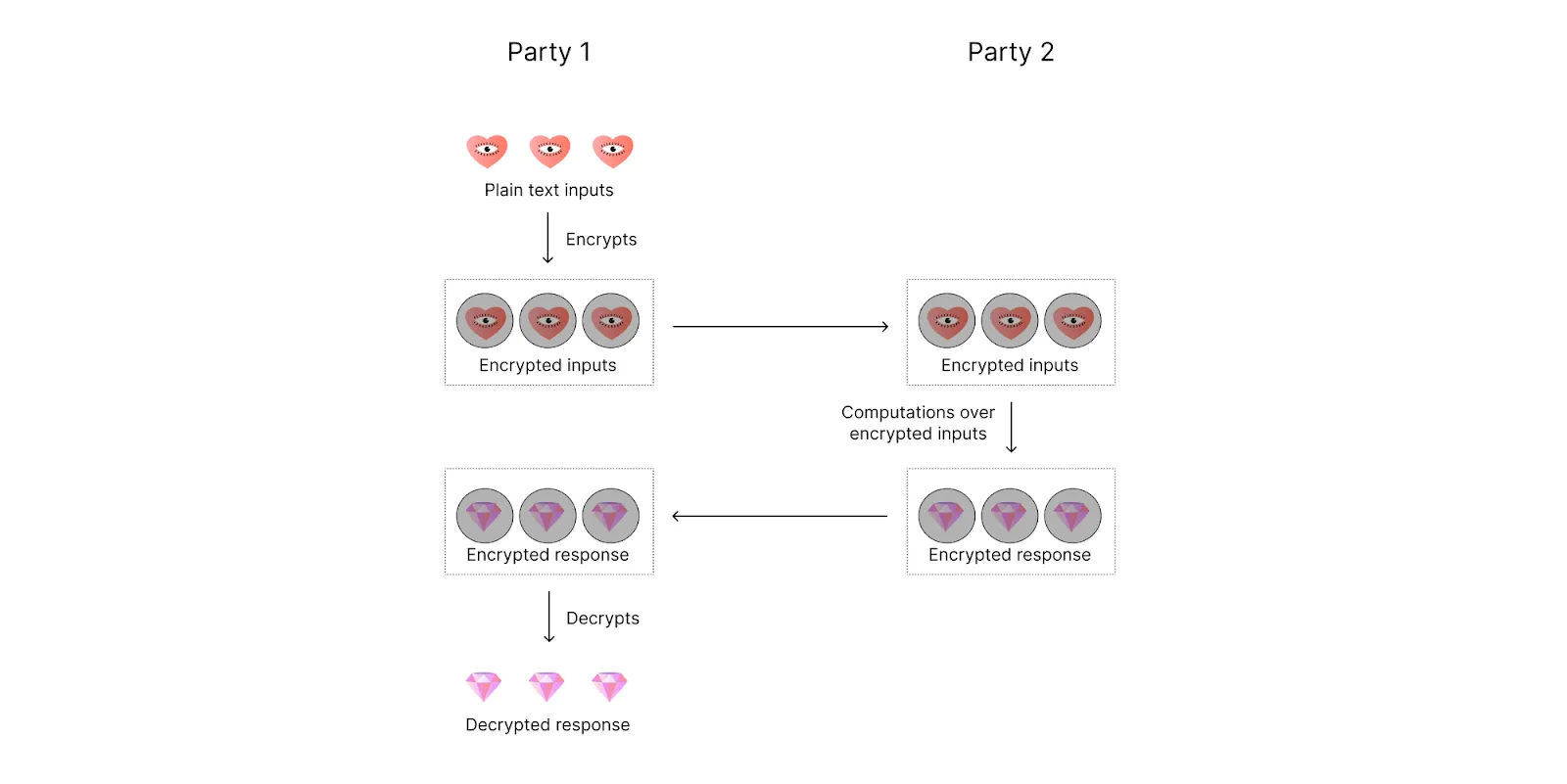

MPC enables a set of parties to interact and compute a joint function of their private inputs while revealing nothing but the output: f(input_1, input_2, …, input_n) → output.
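For the simplest possible f, a sum, the idea can be sketched with additive secret sharing: each party splits its input into random shares that add up to it, every party adds up the shares it receives, and only the combined total is revealed. This is a minimal sketch assuming semi-honest parties (everyone follows the protocol), a single process simulating all parties, and an arbitrarily chosen public prime modulus; it is not a general-purpose MPC protocol.

```python
import secrets
from typing import List

MODULUS = 2**61 - 1  # public prime; all arithmetic is done modulo it

def share(secret: int, n: int) -> List[int]:
    """Split `secret` into n random shares that sum to it mod MODULUS."""
    shares = [secrets.randbelow(MODULUS) for _ in range(n - 1)]
    shares.append((secret - sum(shares)) % MODULUS)
    return shares

def mpc_sum(inputs: List[int]) -> int:
    """f(input_1, ..., input_n) = sum of inputs, revealing only the output."""
    n = len(inputs)
    # Party i splits its input and (conceptually) sends share j to party j.
    dealt = [share(x, n) for x in inputs]
    # Party j adds the shares it received; each one looks uniformly random to it.
    partial = [sum(dealt[i][j] for i in range(n)) % MODULUS for j in range(n)]
    # Publishing the partial sums reveals the total and nothing else.
    return sum(partial) % MODULUS
```

Any n - 1 shares of an input are uniformly random, so no single party learns another's input from the shares alone.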

For example, parties can be servers that hold a distributed database system and the function can be the database update. Or parties can be several people jointly managing a private key from an Ethereum account and the function can be a transaction signing mechanism.

One issue of concern with MPCs is that one or more parties participating in the protocol can be malicious. They can try to:

Hence in the context of MPC security, one wants to ensure that:

To think about MPC security in an exhaustive way, we should consider three perspectives:

Rather than requiring every party in the computation to remain honest, MPC tolerates different levels of corruption depending on the underlying assumptions. Some models remain secure if fewer than 1/3 of the parties are corrupt, some if fewer than 1/2 are corrupt, and some provide security guarantees even when more than half of the parties are corrupt. For details, the formal definition, and proofs of MPC protocol security, check this paper.
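Because these thresholds are strict inequalities, the largest tolerated number of corrupt parties t is the largest integer strictly below the bound, e.g. t < n/3 gives t = floor((n - 1)/3). A small sketch; the model labels are hypothetical, and the "dishonest" case assumes an all-but-one corruption bound, which is typical of dishonest-majority protocols but not stated in the text.

```python
def max_corruptions(n: int, model: str) -> int:
    """Largest number t of corrupt parties among n that a model tolerates."""
    if model == "third":      # secure while t < n/3
        return (n - 1) // 3
    if model == "half":       # honest majority: secure while t < n/2
        return (n - 1) // 2
    if model == "dishonest":  # dishonest majority, assumed up to all-but-one
        return n - 1
    raise ValueError(f"unknown model: {model}")
```

For example, with 10 parties the three models tolerate 3, 4, and 9 corrupt parties respectively.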

There are three main corruption strategies:

Each of these strategies corresponds to a different security model.

Two definitions of malicious behavior are:

When it comes to the definition of privacy, MPC guarantees that the computation process itself doesn’t reveal any information. However, it doesn’t guarantee that the output won’t reveal any information. For an extreme example, consider two people computing the average of their salaries. While it’s true that nothing but the average will be output, when each participant knows their own salary amount and the average of both salaries, they can derive the exact salary of the other person.
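The leak in the salary example is just a rearrangement of the average: if the published average of n inputs is known along with n - 1 of the inputs (your own, plus any colluders'), the last input follows. With two parties, your own input alone is enough. A minimal sketch with a hypothetical helper name:

```python
from typing import List

def leaked_input(average: float, known_inputs: List[float], n: int) -> float:
    """Recover the one unknown input from the published average of n inputs
    and the n - 1 inputs already known: sum = average * n."""
    if len(known_inputs) != n - 1:
        raise ValueError("need exactly n - 1 known inputs to pin down the last one")
    return average * n - sum(known_inputs)
```

For two people, an average of 75,000 and your own salary of 60,000 pin the other salary at 90,000, even though the MPC itself leaked nothing.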

That is to say, while the core “value proposition” of MPC looks very attractive for a wide range of real-world use cases, many nuances must be taken into account before it actually provides a high enough level of security. (It's important to clarify the problem statement and decide whether MPC is the right tool for the task at hand.)

What can be done with MPC protocols today:

When we think about MPC performance, we should consider the following parameters: number of participating parties, witness size of each party, and function complexity.

When it comes to using MPC in a blockchain context, it’s important to consider message complexity, computational complexity, and properties such as public verifiability and identifiable abort (i.e. if a malicious party causes the protocol to halt prematurely, it can be detected). For message distribution, the protocol relies either on P2P channels between every pair of parties (which requires a lot of bandwidth) or on broadcasting. Another concern arises from the permissionless nature of blockchains, since MPC protocols often operate over permissioned sets of nodes.
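The bandwidth concern with pairwise channels comes from quadratic growth: n parties need n(n - 1)/2 channels, and an all-to-all round sends n(n - 1) messages, versus n posts over a broadcast channel. A minimal sketch of that counting; it deliberately ignores the cost of implementing the broadcast channel itself and the size of each message.

```python
def p2p_channels(n: int) -> int:
    """Pairwise channels needed when every pair of n parties talks directly."""
    return n * (n - 1) // 2

def p2p_messages_per_round(n: int) -> int:
    """All-to-all round: each party sends one message to each of the other n - 1."""
    return n * (n - 1)

def broadcast_messages_per_round(n: int) -> int:
    """The same round over a broadcast channel: each party posts once."""
    return n
```

At 100 parties, that is 4,950 channels and 9,900 point-to-point messages per round, compared with 100 broadcast posts.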

Taking into account all that, it’s clear that MPC is a very nuanced technology on its own. And it becomes even more nuanced when combined with other technologies. Adding MPC to a specific blockchain protocol often requires designing a custom MPC protocol that will fit. And that design process often requires a room full of MPC PhDs who can not only design but also prove its security.

Whom to follow for MPC updates: dWallet Labs, TACEO, Fireblocks, Cursive, PSE, Fairblock, Soda Labs, Silence Laboratories, Nillion.

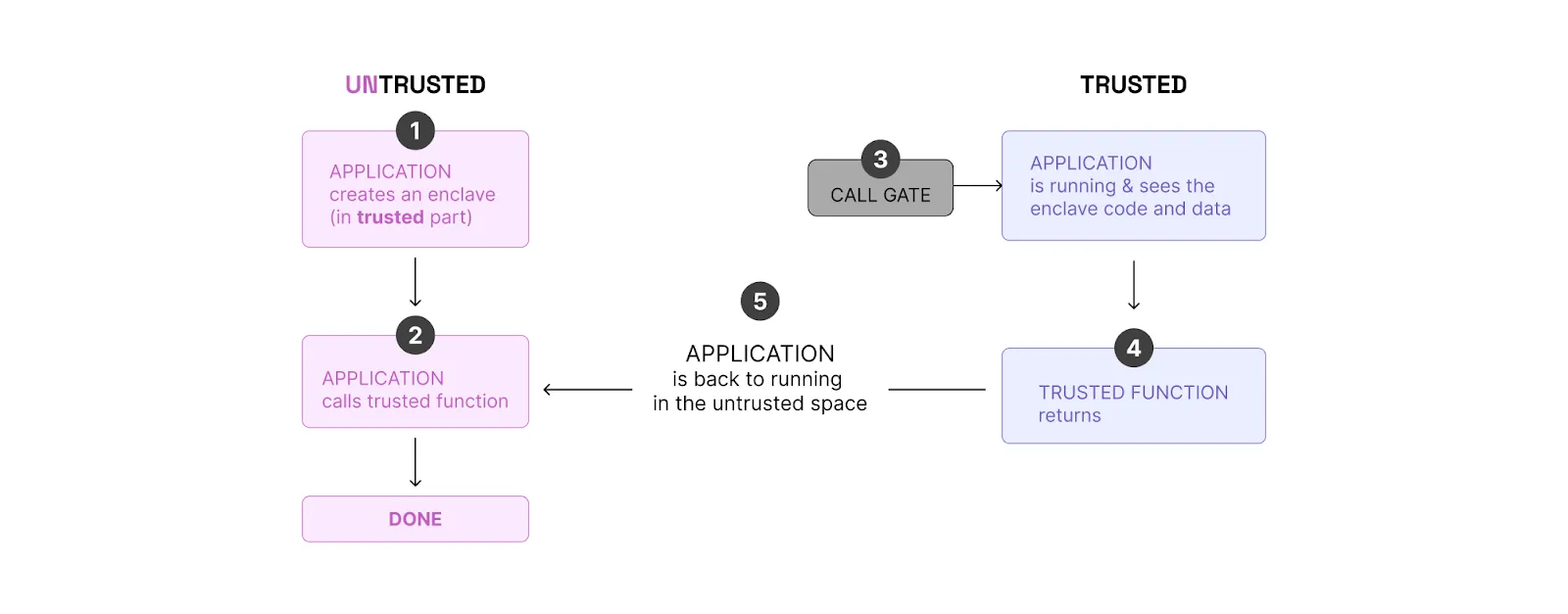

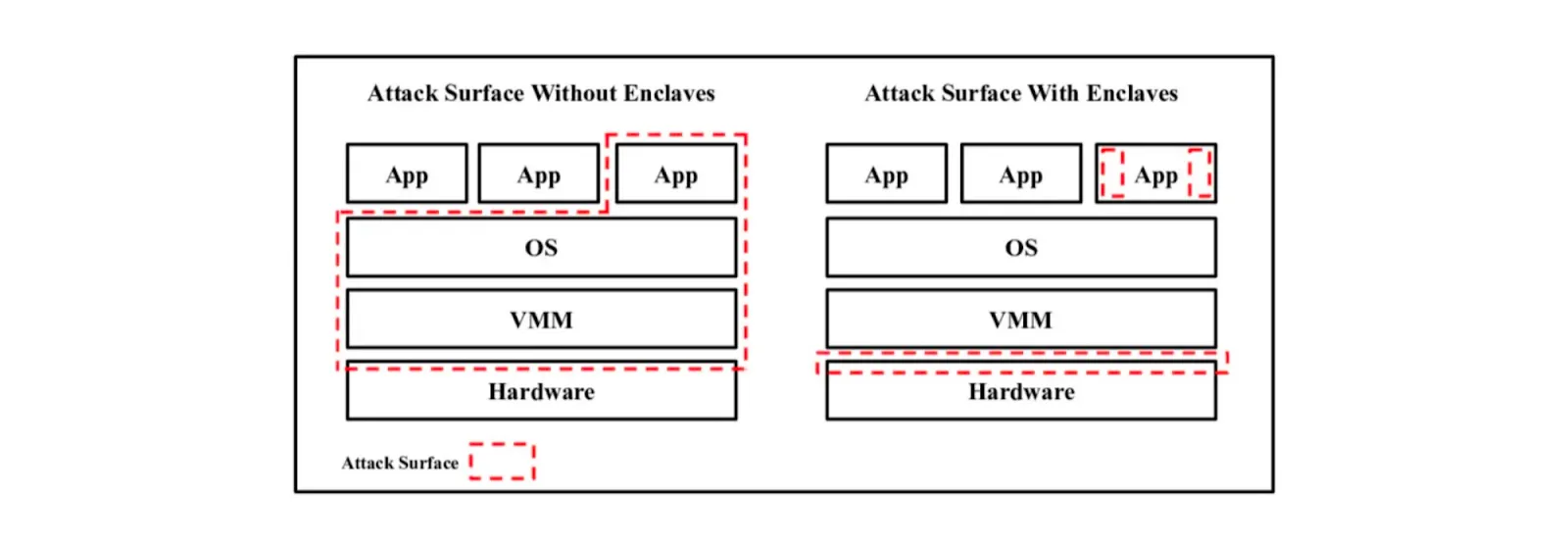

TEE stands for Trusted Execution Environment: an area of a device's main processor that is isolated from the system's main operating system (OS). It ensures that data is stored, processed, and protected in a separate environment. One of the most widely known TEE implementations (and the one most often mentioned in blockchain discussions) is Intel's Software Guard Extensions (SGX).

SGX can be considered a type of private execution. For example, if a smart contract is run inside SGX, it’s executed privately.

SGX creates an isolated memory region for code and data (an enclave) that the rest of the system cannot address, and encrypts both at the hardware level.

How SGX works:

It’s worth noting that the enclave has a key pair: a secret key and a public key. The secret key is generated inside the enclave and never leaves it. The public key is available to anyone: users can encrypt a message with it so that only the enclave can decrypt it.
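The key-pair arrangement can be sketched with textbook RSA and toy primes: the enclave object keeps its private exponent to itself and exports only the public key, and anyone can encrypt to it. This is a minimal illustration under stated assumptions: `ToyEnclave` and `user_encrypt` are hypothetical names, the primes are far too small and unpadded RSA is insecure, and in Python the "never leaves" property is only a convention, whereas SGX enforces it in hardware.

```python
from typing import Tuple

class ToyEnclave:
    """Hypothetical stand-in for an enclave: the private exponent stays inside."""

    def __init__(self) -> None:
        p, q = 61, 53                                   # toy primes, insecure
        self._n = p * q
        self._e = 17
        self._d = pow(self._e, -1, (p - 1) * (q - 1))   # secret key, never exported

    def public_key(self) -> Tuple[int, int]:
        """Only (n, e) is published."""
        return self._n, self._e

    def decrypt(self, ciphertext: int) -> int:
        return pow(ciphertext, self._d, self._n)

def user_encrypt(message: int, public_key: Tuple[int, int]) -> int:
    """Anyone can encrypt to the enclave using only its public key."""
    n, e = public_key
    if not 0 <= message < n:
        raise ValueError("toy scheme: message must be in [0, n)")
    return pow(message, e, n)
```

Usage: `enclave = ToyEnclave(); c = user_encrypt(65, enclave.public_key())`, after which only `enclave.decrypt(c)` recovers 65.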

An SGX feature often used in the blockchain context is attestation: the process of demonstrating that a software executable has been properly instantiated on a platform. Remote attestation allows a remote party to be confident that the intended software is running securely within an enclave on a fully patched, Intel SGX-enabled platform.
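At its core, a remote attestation check asks two questions: was this report really produced by genuine hardware, and does the reported measurement (a hash of the loaded code, which SGX calls MRENCLAVE) match the code we expect? The sketch below models only that logic. The `HARDWARE_KEY` and the use of HMAC are hypothetical simplifications: real SGX quotes are signed with an asymmetric attestation key and checked through Intel's attestation infrastructure, so a remote verifier never holds the signing key.

```python
import hashlib
import hmac
from typing import Tuple

# HYPOTHETICAL stand-in for the hardware-rooted signing key. HMAC is used only
# to keep this sketch stdlib-only; real SGX uses asymmetric signatures.
HARDWARE_KEY = b"toy-hardware-key"

def measure(code: bytes) -> bytes:
    """Hash of the code loaded into the enclave (analogous to MRENCLAVE)."""
    return hashlib.sha256(code).digest()

def produce_quote(loaded_code: bytes) -> Tuple[bytes, bytes]:
    """On the platform: report the measurement, vouched for by the hardware key."""
    m = measure(loaded_code)
    return m, hmac.new(HARDWARE_KEY, m, hashlib.sha256).digest()

def verify_quote(quote: Tuple[bytes, bytes], expected_code: bytes) -> bool:
    """Remote party: is the quote genuine, and is the expected code what is running?"""
    m, tag = quote
    expected_tag = hmac.new(HARDWARE_KEY, m, hashlib.sha256).digest()
    genuine = hmac.compare_digest(tag, expected_tag)
    return genuine and hmac.compare_digest(m, measure(expected_code))
```

A quote for tampered code fails the measurement check, and a quote with a forged tag fails the authenticity check.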

Core SGX concerns:

As for SGX costs, generating the proof can be considered free. If one wants to use remote attestation, however, there is an initial one-time cost (once per SGX prover) on the order of 1M gas to make sure the code in SGX is running as expected.

On-chain verification costs about as much as verifying an ECDSA signature (~5k gas), whereas verifying a ZK proof costs ~300k gas.
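Putting the quoted figures together shows when the one-time attestation pays for itself. A minimal sketch, assuming a single SGX prover (so one attestation) and treating the rough gas figures above as exact constants:

```python
from typing import Tuple

# Rough figures from the text, treated as exact for illustration.
ATTESTATION_GAS = 1_000_000  # one-time remote attestation, per SGX prover
SGX_VERIFY_GAS = 5_000       # per proof: roughly an ECDSA signature check
ZK_VERIFY_GAS = 300_000      # per proof: ZK proof verification

def cumulative_gas(k: int) -> Tuple[int, int]:
    """Total gas after k verified proofs: (SGX path, ZK path)."""
    return ATTESTATION_GAS + SGX_VERIFY_GAS * k, ZK_VERIFY_GAS * k

def break_even() -> int:
    """Smallest number of proofs after which the SGX path is cheaper overall."""
    k = 1
    while True:
        sgx, zk = cumulative_gas(k)
        if sgx < zk:
            return k
        k += 1
```

After 3 proofs the SGX path still costs more (1,015,000 vs 900,000 gas); from the 4th proof on it is cheaper, and the gap widens by 295,000 gas per proof.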

As for execution time, there is effectively no overhead: generating an SGX proof for a zk-rollup block, for example, takes around 100 ms.

Where SGX is utilized in blockchain today:

Whom to follow for TEE updates: Secret Network, Flashbots, Andrew Miller, Oasis, Phala, Marlin, Automata, TEN.

FHE enables encrypted data processing (i.e. computation on encrypted data).

The idea of FHE was proposed in 1978 by Rivest, Adleman, and Dertouzos. “Fully” means that both addition and multiplication can be performed on encrypted data, any number of times. Let m be some plaintext and E(m) its encryption (ciphertext). Then additive homomorphism is E(m_1 + m_2) = E(m_1) + E(m_2) and multiplicative homomorphism is E(m_1 * m_2) = E(m_1) * E(m_2), where the operation on the right-hand side is the scheme's corresponding operation on ciphertexts.
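The two identities can be seen in two classic partially homomorphic schemes: textbook RSA, where multiplying ciphertexts multiplies plaintexts, and Paillier, where multiplying ciphertexts adds plaintexts. Neither is FHE, since each supports only one of the two operations; an FHE scheme must support both at once. This is a minimal sketch with toy primes that are far too small to be secure; the parameter choices are assumptions made for illustration.

```python
import math
import secrets

# Toy primes: insecure, illustration only.
P, Q = 61, 53
N = P * Q  # shared modulus for both toy schemes

# Textbook RSA: E(m1) * E(m2) mod N decrypts to m1 * m2 mod N.
RSA_E = 17
RSA_D = pow(RSA_E, -1, (P - 1) * (Q - 1))  # modular inverse (Python 3.8+)

def rsa_encrypt(m: int) -> int:
    return pow(m, RSA_E, N)

def rsa_decrypt(c: int) -> int:
    return pow(c, RSA_D, N)

# Paillier with generator g = N + 1: E(m1) * E(m2) mod N^2 decrypts to m1 + m2 mod N.
N2 = N * N
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # lcm(P - 1, Q - 1)
MU = pow(LAM, -1, N)                                # valid because g = N + 1

def paillier_encrypt(m: int) -> int:
    r = secrets.randbelow(N - 1) + 1
    while math.gcd(r, N) != 1:  # the randomizer r must be invertible mod N
        r = secrets.randbelow(N - 1) + 1
    return pow(N + 1, m, N2) * pow(r, N, N2) % N2

def paillier_decrypt(c: int) -> int:
    # L(x) = (x - 1) // N applied to c^LAM mod N^2, then scaled by MU.
    return (pow(c, LAM, N2) - 1) // N * MU % N
```

Usage: `rsa_decrypt(rsa_encrypt(7) * rsa_encrypt(6) % N)` gives 42, and `paillier_decrypt(paillier_encrypt(7) * paillier_encrypt(6) % N2)` gives 13. Note that in Paillier the "+" on ciphertexts is actually a modular multiplication.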

Schemes supporting only addition (or only multiplication) had been in use for a while, but supporting both at once remained an open problem. In 2009, Craig Gentry proposed using ideal lattices to solve it. That made it possible to do both addition and multiplication, although it also made growing noise an issue.

How FHE works:

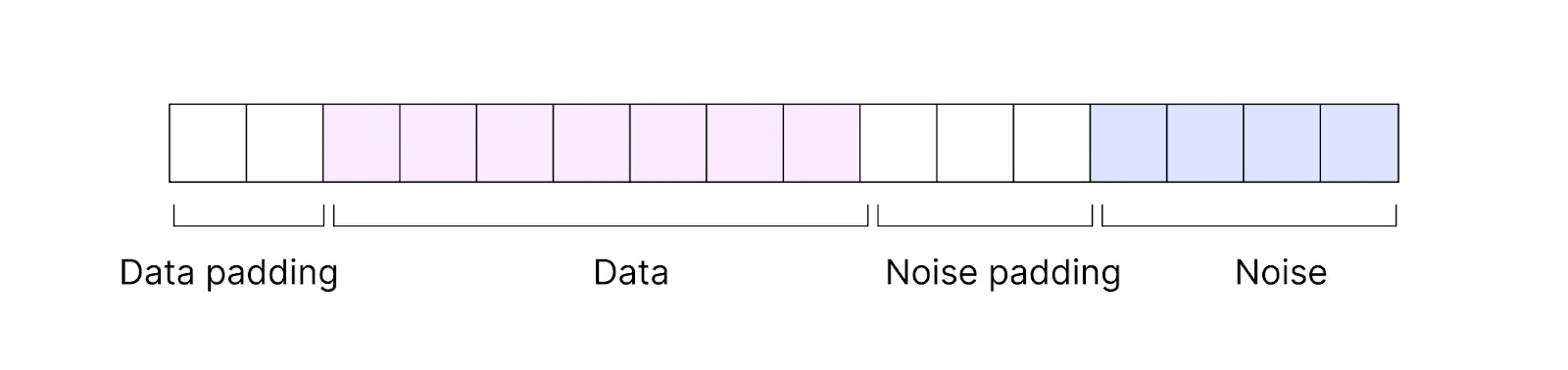

Plaintext is encrypted into a ciphertext, which consists of the encrypted data plus some noise.

This means that computations on a ciphertext operate not purely on the data but on the data together with the added noise. The noise grows with each operation, and after several operations it starts to overflow into the bits that hold the actual data, which can lead to incorrect results.
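Noise growth can be watched in a toy "somewhat homomorphic" scheme over the integers, in the style of van Dijk, Gentry, Halevi, and Vaikuntanathan (DGHV): a bit is hidden as bit + 2·noise + (secret odd key)·(large random number), adding ciphertexts XORs the bits, and multiplying them ANDs the bits. Noise roughly adds under addition but multiplies under multiplication, so decryption breaks once it passes half the key. This sketch uses tiny insecure parameters chosen for illustration and has no bootstrapping; fixing the noise at r = 5 keeps the demo deterministic.

```python
import random
from typing import Optional, Tuple

# Toy DGHV-style symmetric scheme for single bits. Insecure, illustration only.
SECRET_P = 10_007  # secret key: an odd integer

def encrypt(bit: int, r: Optional[int] = None) -> int:
    """Ciphertext = bit + 2*r (small noise) + SECRET_P*q (large random mask)."""
    if r is None:
        r = random.randint(-10, 10)
    q = random.randint(1_000, 10_000)
    return bit + 2 * r + SECRET_P * q

def centered(c: int) -> int:
    """Reduce c mod SECRET_P into the symmetric range around zero: bit + 2*noise."""
    x = c % SECRET_P
    return x - SECRET_P if x > SECRET_P // 2 else x

def decrypt(c: int) -> int:
    # Correct only while |bit + 2*noise| stays below SECRET_P / 2.
    return centered(c) % 2

def and_chain(k: int, r: int = 5) -> Tuple[int, int]:
    """Multiply k encryptions of 1 (a homomorphic AND).
    Returns (decrypted bit, value of bit + noise after reduction)."""
    c = encrypt(1, r)
    for _ in range(k - 1):
        c *= encrypt(1, r)
    return decrypt(c), centered(c)
```

With r = 5 each fresh ciphertext carries 1 + 2·5 = 11, so after k factors the value is 11^k: `and_chain(3)` returns (1, 1331), but `and_chain(4)` returns (0, 4634) because 11^4 = 14,641 has wrapped past SECRET_P / 2, and the AND of four 1s decrypts incorrectly to 0.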

A number of tricks were later proposed to handle the noise and make FHE work more reliably. One of the best known is bootstrapping, a special operation that resets the noise to its nominal level. However, bootstrapping is slow and costly (in terms of both memory consumption and computation).

Researchers rolled out even more workarounds to make bootstrapping efficient and took FHE several more steps forward. Further details are out-of-scope for this article, but if you’re interested in FHE history, check out this talk by mathematician Zvika Brakerski.

Core FHE concerns:

Compared to computation on plaintext, the best per-operation overhead available today is polylogarithmic [GHS12b]: for input size n, that means O(log^k(n)) for some constant k. Communication overhead is reasonable when many ciphertexts are batched and unbatched together, but not otherwise.

Evaluation keys are huge, larger even than ciphertexts, which are large themselves: an evaluation key is around 160,000,000 bits (about 20 MB). Moreover, these keys are used constantly. Every homomorphic evaluation needs to access the evaluation key, bring it into the CPU (a regular data bus in a regular processor is unable to do this), and compute on it.

If you want to do something beyond addition and multiplication (a branch operation, for example), you have to break it down into a sequence of additions and multiplications. That’s pretty expensive. Imagine you have an encrypted database and an encrypted data chunk, and you want to insert this chunk at a specific position in the database. Represented as a circuit, this operation will be as large as the whole database.
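The standard way to express a branch with only additions and multiplications is select(b, x, y) = b·x + (1 − b)·y. Writing a chunk into a secret position then means applying that select to every slot, driven by an encrypted one-hot position vector, which is why the circuit grows with the whole database. The sketch below runs on plain integers standing in for ciphertexts; the function names are hypothetical, and it shows the simplest case (overwriting one slot), since a true insertion that shifts later entries would be at least as large.

```python
from typing import List, Tuple

def select(bit: int, x: int, y: int) -> int:
    """Branch-free 'x if bit else y' using only + and * (two multiplications).
    Under FHE, bit, x, and y would all be ciphertexts."""
    return bit * x + (1 - bit) * y

def oblivious_write(db: List[int], one_hot: List[int], chunk: int) -> Tuple[List[int], int]:
    """Write `chunk` at the slot flagged in `one_hot` (encrypted under FHE).
    Every slot must be touched, because the position is secret.
    Returns (new database, number of multiplications performed)."""
    if len(db) != len(one_hot):
        raise ValueError("position vector must match the database length")
    out, mults = [], 0
    for flag, old in zip(one_hot, db):
        out.append(select(flag, chunk, old))
        mults += 2
    return out, mults
```

`oblivious_write([10, 20, 30, 40], [0, 0, 1, 0], 99)` yields `([10, 20, 99, 40], 8)`: the multiplication count is 2 × len(db) regardless of which slot is written.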

In the future, FHE performance is expected to improve both on the algorithmic side (new tricks) and on the hardware side (acceleration and ASIC design). This promises to allow for more complex smart contract logic as well as more computation-intensive use cases such as AI/ML. A number of companies are working on designing and building FHE-specific FPGAs (e.g. Belfort).

“Misuse of FHE can lead to security faults.”

What can be done with FHE today:

Note: In all of these examples, we are talking about plain FHE, without any MPC or ZK superstructures handling the core FHE issues.

Whom to follow for FHE updates: Zama, Sunscreen, Zvika Brakerski, Inco, FHE Onchain.

As the technology overview shows, these technologies are not exactly interchangeable. That said, they can complement each other. So which ones should be combined, and why?

Disclaimer: Each of the technologies we are talking about is pretty complex on its own. The combinations of them we discuss below are, to a large extent, theoretical and hypothetical. However, there are a number of teams working on combining them at the time of writing (both research and implementation).

In this section, we mostly describe two papers as examples and don’t claim to be exhaustive.

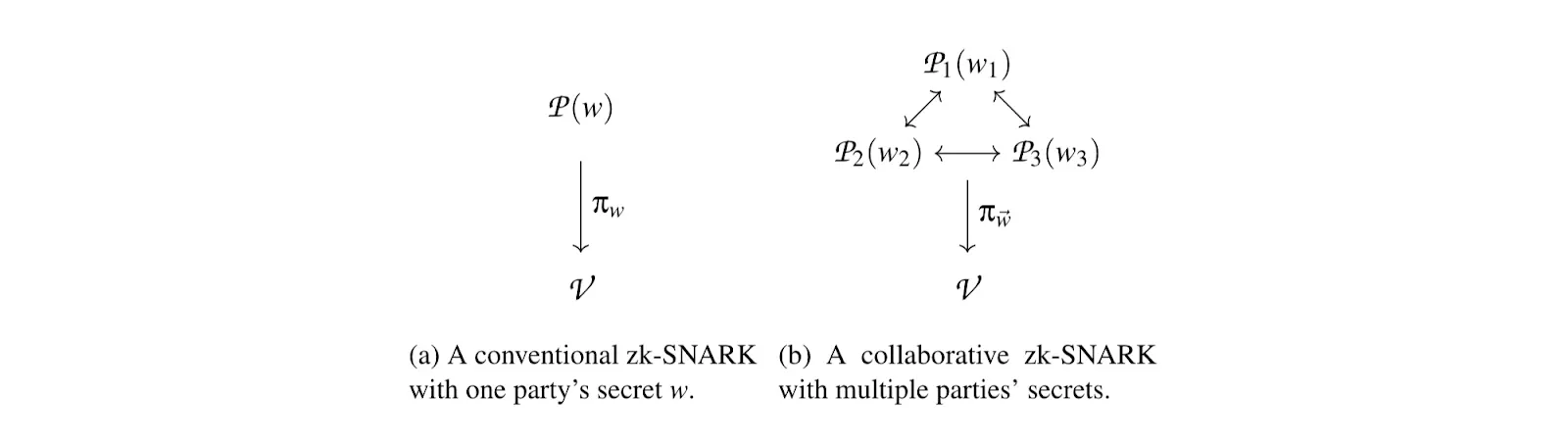

One possible application of ZK-MPC is a collaborative zk-SNARK, which allows users to jointly generate a proof over the witnesses of multiple, mutually distrusting parties. The proof generation algorithm is run as an MPC among N provers, where the function f is the circuit representation of a zk-SNARK proof generator.
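One reason collaborative proving can be practical is that some heavy steps of a SNARK prover, such as committing to the witness, are linear in the witness. Each prover can apply such a step to its own additive share, and combining the partial results gives the same answer as applying it to the full witness, with no interaction. The sketch below shows only that linear piece, using a toy vector commitment in a small prime-order group as a stand-in for a prover's multi-scalar multiplications; the group, generators, and names are assumptions, and the non-linear parts of proving would still need genuine MPC multiplication protocols. For brevity one function deals all shares, whereas in practice each party would share its own part of the witness.

```python
import secrets
from typing import List

# Toy prime-order group (insecure): P = 2*Q + 1, and each generator has order Q.
P, Q = 2039, 1019
GENS = [4, 9, 25]  # nontrivial squares mod P, hence of order Q

def commit(vec: List[int]) -> int:
    """Linear (homomorphic) commitment prod_i GENS[i]^vec[i] mod P."""
    acc = 1
    for g, v in zip(GENS, vec):
        acc = acc * pow(g, v % Q, P) % P
    return acc

def share_vector(vec: List[int], n: int) -> List[List[int]]:
    """Split a witness vector into n additive shares mod Q."""
    shares = [[secrets.randbelow(Q) for _ in vec] for _ in range(n - 1)]
    last = [(v - sum(s[i] for s in shares)) % Q for i, v in enumerate(vec)]
    return shares + [last]

def collaborative_commit(witness: List[int], n: int) -> int:
    """Each prover commits to its share alone; the partial results multiply together."""
    acc = 1
    for partial in (commit(s) for s in share_vector(witness, n)):
        acc = acc * partial % P
    return acc
```

`collaborative_commit([3, 14, 15], 3)` equals `commit([3, 14, 15])`, even though no prover ever saw the full witness.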

Collaborative zk-SNARKs also offer an efficient construction for a cryptographic primitive called a publicly auditable MPC (PA-MPC). This is an MPC that also produces a proof the public can use to verify that the computation was performed correctly with respect to commitments to the inputs.