Hello there!

This is the second article in a series on how to write smart contracts in Huff.

Huff is a recent creation that has rudely inserted itself into the panoply of Ethereum smart-contract programming languages. Barely a language, Huff is more like an assembler that can manage a few guttural yelps that could charitably be interpreted as some kind of syntax.

I created Huff so that I could write an efficient elliptic curve arithmetic contract called Weierstrudel, and reduce the costs of zero-knowledge cryptography on Ethereum. So, you know, a general purpose language that will take the world by storm at any moment…

But hey, I guess it can be used to write an absurdly over-optimised ERC20 contract as well.

So let’s dive back into where we left off.

Prerequisites

- Part 1 of this series

- Knowledge of Solidity inline assembly

- A large glass of red wine. Huff’s Ballmer peak is extremely high, so a full-bodied wine is ideal. A Malbec or a Merlot will also balance out Huff’s bitter aftertaste, but vintage is more important than sweetness here.

Difficulty rating: (medium Huff)

Getting back into the swing of things: Transfer

We left off in the previous article with the skeletal structure of our contract, which is great! We can jump right into the fun stuff and start programming some logic.

We need to implement function transfer(address to, uint256 value) public returns (bool). Here’s a boilerplate Solidity implementation of transfer:

function transfer(address to, uint256 value) public returns (bool) {

balances[msg.sender] = balances[msg.sender].sub(value);

balances[to] = balances[to].add(value);

emit Transfer(msg.sender, to, value);

return true;

}

Hmm. Well, this is awkward. We need to access some storage mappings and emit an event.

Huff doesn’t have mappings or events.

…

Okay, let’s take a step back. An ERC20 token represents its balances by mapping addresses to integers: mapping(address => uint) balances. But… Huff does not have types (of course Huff doesn’t have types, type checking is expensive! Well, it’s not free, sometimes, so it had to go).

In order to emulate this, we need to dig under the hood and figure out how Solidity represents mappings, with hashes.

Breaking down a Solidity mapping

Smart contracts store data via the sstore opcode — which takes a pointer to a storage location. Each storage location can contain 32 bytes of data, but these locations don’t have to be linear (unlike memory locations, which are linear).

Mappings work by combining the mapping key with the storage slot of the mapping, and hashing the result. The result is a 32-byte storage pointer that is unique both to the key being used and to the mapping variable in question.
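Here is a minimal sketch of the bytes that go into that hash, assuming the key comes first and the mapping's slot second, each padded to a 32-byte big-endian word. The address is hypothetical, and the hashing step itself is left out because keccak256 is not in Python's standard library (hashlib.sha3_256 uses different padding and gives a different digest).

```python
# Sketch: build the 64-byte input that is hashed to locate balances[key].
# Assumption: key first, mapping slot second, each a 32-byte big-endian word.

def mapping_preimage(key: int, slot: int) -> bytes:
    return key.to_bytes(32, "big") + slot.to_bytes(32, "big")

holder = 0x00000000000000000000000000000000DEADBEEF  # hypothetical address
preimage = mapping_preimage(holder, 0)               # balances sits at slot 0x00

print(len(preimage))   # 64
print(preimage.hex())  # 32 bytes of padded address, then 32 zero bytes
```

Two different mappings (different slots) or two different keys give different inputs, which is why the resulting storage pointers do not collide in practice.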

So, we can solve this problem by implementing mappings from scratch! The Transfer event requires address from, address to, uint256 value. We’ll deal with the event at the end of this macro, but given that we will be re-using the from, to, value variables a lot, we might as well throw them on the stack. What could go wrong?

Initialising the stack

Before we start cutting some code, let’s map out the steps our method has to perform:

- Increase balances[to] by value

- Decrease balances[msg.sender] by value

- Error checking

- Emit the Transfer(from, to, value) event

- Return true

When writing an optimised Huff macro, we need to think strategically about how to perform the above in order to minimise the number of swap opcodes that are required.

Specifically, the variables to and value are located in calldata, the data structure that stores input data sent to the smart contract. We can load a word of calldata via calldataload. The calldataload opcode has one input, the offset in calldata that we’re loading from. Pushing that offset costs 3 gas and calldataload itself costs another 3, so it costs 6 gas to load a word of calldata.

It only costs 3 gas to duplicate a variable on the stack, so we only want to load from calldata once and re-use variables with the dup opcode.

Because the stack is a last-in-first-out structure, the first variables we load onto the stack will be consumed by the last bit of logic in our method.

The last ‘bit of logic’ we need is the event that we will be emitting, so let’s deal with how that will work.

Huff and events

Imagine we’re almost done implementing transfer(address to, uint256 value) public returns (bool). The only minor issue is that we need to emit an event, Transfer(address indexed from, address indexed to, uint256 value).

…I have a confession to make. I’ve never written a Huff contract that emits events. Still, there’s a first time for everything yes?

Events have two types of data associated with them, topics and data.

Topics are what get created when an event parameter has the indexed prefix. Instead of being stored in the log’s data, an indexed parameter is stored as a topic, which is used as a database lookup index. For a value type such as address indexed from, the topic is the value itself, padded to 32 bytes; only dynamic types like strings and arrays are replaced by their keccak256 hash.

i.e. When searching the event logs, you can pull out all Transfer events that contained a given address in the from or to field. But if you only look at the data field of a bunch of Transfer event logs, you won’t find from or to in there; they live in the topics.

Our event Transfer has three topics, despite only having two indexed parameters. The event signature is also a topic: the keccak256 hash of the event string Transfer(address,address,uint256), much like a function signature.

Digging around in Remix, this is the event signature:

0xDDF252AD1BE2C89B69C2B068FC378DAA952BA7F163C4A11628F55A4DF523B3EF. Just rolls off the tongue doesn’t it?

So we know what we need to do with indexed data — just supply the ‘topics’ on the stack. Next, how does an event log non-indexed data?

Taking a step back, there are five log opcodes: log0, log1, log2, log3, log4. The number in each opcode’s name is the number of topics attached to the log.

We want log3. The interface for the log3 opcode is log3(p1, p2, a, b, c). Memory p1 to p1+p2 contains the log data, and a, b, c represent the log topics.

i.e. we want log3(p1, p2, event_signature, from, to). The values from, to, event_signature are going to be the last variables consumed on our stack, so they must be the first variables we add to it at the start of our program.

Finally, we’re in a position to write some Huff code, oh joy. Here it is:

0x04 calldataload

caller

0xDDF252AD1BE2C89B69C2B068FC378DAA952BA7F163C4A11628F55A4DF523B3EF

Next up, we need to define the memory that will contain value. That’s simple enough — we’ll be storing value at memory position 0x00, so p1=0x00 and p2=0x20. Giving us

0x04 calldataload

caller

0xDDF252AD1BE2C89B69C2B068FC378DAA952BA7F163C4A11628F55A4DF523B3EF

0x20

0x00

Finally, we need value, so that we can store it in memory for log3. We will execute the mstore opcode at the end of our method, so that we can access value from the stack for the rest of our method. For now, we just load it onto the stack:

0x04 calldataload

caller

0xDDF252AD1BE2C89B69C2B068FC378DAA952BA7F163C4A11628F55A4DF523B3EF

0x20

0x00

0x24 calldataload

One final thing: we have assumed that 0x04 calldataload will map to address to. But 0x04 calldataload loads a 32-byte word onto the stack, and addresses are only 20 bytes! We need to mask the 12 most-significant bytes of 0x04 calldataload, in case the transaction sender has added non-zero junk into those upper 12 bytes of calldata.

We can fix this by calling 0x04 calldataload 0x000000000000000000000000ffffffffffffffffffffffffffffffffffffffff and.

Actually, let’s make a macro for that, and one for our event signature to keep it out of the way:

#define macro ADDRESS_MASK = takes(1) returns(1) {

0x000000000000000000000000ffffffffffffffffffffffffffffffffffffffff

and

}

#define macro TRANSFER_EVENT_SIGNATURE = takes(0) returns(1) {

0xDDF252AD1BE2C89B69C2B068FC378DAA952BA7F163C4A11628F55A4DF523B3EF

}
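To see what ADDRESS_MASK buys us, here is a small Python sketch of the same bitwise and. The addresses are hypothetical, and the mask is written as (1 << 160) - 1, which is the same constant as the 40 f's above.

```python
ADDRESS_MASK = (1 << 160) - 1  # low 20 bytes set, upper 12 bytes clear

def mask_address(word: int) -> int:
    """Keep only the low 20 bytes of a 32-byte calldata word."""
    return word & ADDRESS_MASK

clean = 0x1234567890ABCDEF1234567890ABCDEF12345678  # hypothetical address
dirty = (0xBAD << 160) | clean                      # junk in the upper 12 bytes

print(mask_address(dirty) == clean)  # True
print(mask_address(clean) == clean)  # True
```

Without the mask, the dirty word would be hashed as-is and the tokens would land in a balance slot that no real address can ever spend from.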

The final state of our ‘initialisation’ macro is this:

#define macro ERC20__TRANSFER_INIT = takes(0) returns(6) {

0x04 calldataload ADDRESS_MASK()

caller

TRANSFER_EVENT_SIGNATURE()

0x20

0x00

0x24 calldataload

}
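If you want to double-check where everything ended up, the stack can be modelled as a plain Python list whose last element is the top. This is only a sketch with string placeholders instead of real words; the dup helper is a hypothetical stand-in for the dupN opcodes.

```python
# Model the stack as a list; the last element is the top of the stack.
stack = []
for item in ["to", "from", "signature", "0x20", "0x00", "value"]:
    stack.append(item)  # same order as the pushes in ERC20__TRANSFER_INIT

def dup(n: int) -> None:
    """dupN copies the Nth item counted from the top (dup1 is the top)."""
    stack.append(stack[-n])

top_first = list(reversed(stack))
print(top_first)  # ['value', '0x00', '0x20', 'signature', 'from', 'to']

dup(6)
print(stack[-1])  # to
```

Read top-first, value comes out first and to comes out last, and 0x00 sits just above 0x20 because it was pushed after it. That is the order log3 wants its memory offset and length in.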

Updating balances[to]

Now that we’ve set up our stack, we can proceed iteratively through our method’s steps (you know, like a normal program…).

To increase balances[to], we need to handle mappings. To start, let’s get our mapping key for balances[to]. We need to place to and the storage slot unique to balances linearly in 64 bytes of memory, so we can hash it:

// stack state:

// value 0x00 0x20 signature from to

dup6 0x00 mstore

BALANCE_LOCATION() 0x20 mstore

0x40 0x00 sha3

In the functional style, sha3(a, b) will create a keccak256 hash of the data in memory, starting at memory index a and ending at index a+b.

Notice how our ‘mapping’ uses 0x40 bytes of memory? That’s why the ‘free memory pointer’ in a Solidity contract is stored at memory index 0x40 — the first 2 words of memory are used for hashing to compute mapping keys.

Optimizing storage pointer construction

I don’t know about you, but I’m not happy with this macro. It smells…inefficient. In part 1, when we set BALANCE_LOCATION() to storage slot 0x00, we did that on purpose! balances is the most commonly used mapping in an ERC20 contract, and there’s no point storing 0x00 in memory. Smart contract memory isn’t like normal memory, which starts out uninitialised: all memory starts off initialised to 0x00. We can scrap that stuff, leaving:

// stack state:

// value 0x00 0x20 signature from to

dup6 0x00 mstore

0x40 0x00 sha3

Note that if our program has previously stored something at index 0x20, we will compute the wrong mapping key.

But this is Huff; clearly the solution is to just never use more than 32 bytes of memory for our entire program. What are we, some kind of RAM hog?
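To make the hazard concrete, here is a rough Python model of the first two words of memory. The mstore and hash_input helpers are hypothetical stand-ins for the opcodes, the address is made up, and the hash itself is skipped: it is enough to show that the bytes being hashed change.

```python
memory = bytearray(64)  # EVM memory starts out zeroed; two words suffice here

def mstore(offset: int, value: int) -> None:
    memory[offset:offset + 32] = value.to_bytes(32, "big")

def hash_input(offset: int, size: int) -> bytes:
    """The bytes that sha3 would read (the hashing itself is left out)."""
    return bytes(memory[offset:offset + size])

to = 0xABCD  # hypothetical address

mstore(0x00, to)
clean = hash_input(0x00, 0x40)  # second word is still all zeroes

mstore(0x20, 1)                 # something scribbles on the second word...
mstore(0x00, to)
dirty = hash_input(0x00, 0x40)  # ...and the same key now hashes differently

print(clean == dirty)  # False
```

So the shortcut is only safe while nothing in the program ever writes to memory index 0x20 before a mapping key is computed.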

Setting storage variables

Next, we’re going to update balances[to]. To start, we need to load up our balance and duplicate value, in preparation to add it to balances[to].

// stack state:

// key(balances[to]) value 0x00 0x20 signature from to

dup1 sload

dup3

Now, remember that MATH__ADD macro we made in part 1? We can use it here! See, Huff makes programming easy with plug-and-play macros! What a joy.

// stack state:

// key(balances[to]) value 0x00 0x20 signature from to

dup1 sload

dup3

MATH__ADD()

…wait. I said we could use MATH__ADD, not that we were going to. I don’t like how expensive this code is looking and I think we can haggle.

Specifically, let’s look at that MATH__ADD macro:

template

#define macro MATH__ADD = takes(2) returns(1) {

// stack state: a b

dup2 add

// stack state: (a+b) a

dup1 swap2 gt

// stack state: (a > (a+b)) (a+b)

jumpi

}

Remember how, well, optimised, our macro seemed in part 1? All I see now is a bloated gorgon feasting on wasted gas with opcodes dribbling down its chin. Disgusting!

First off, we don’t need that swap2 opcode. Our original macro consumed a from the stack because that variable wasn’t needed anymore. But… a is uint256 value, and we do need it for later — we can replace dup1 swap2 with a simple dup opcode.

But there’s a larger culprit here that needs to go, that jumpi opcode.

Combining error codes

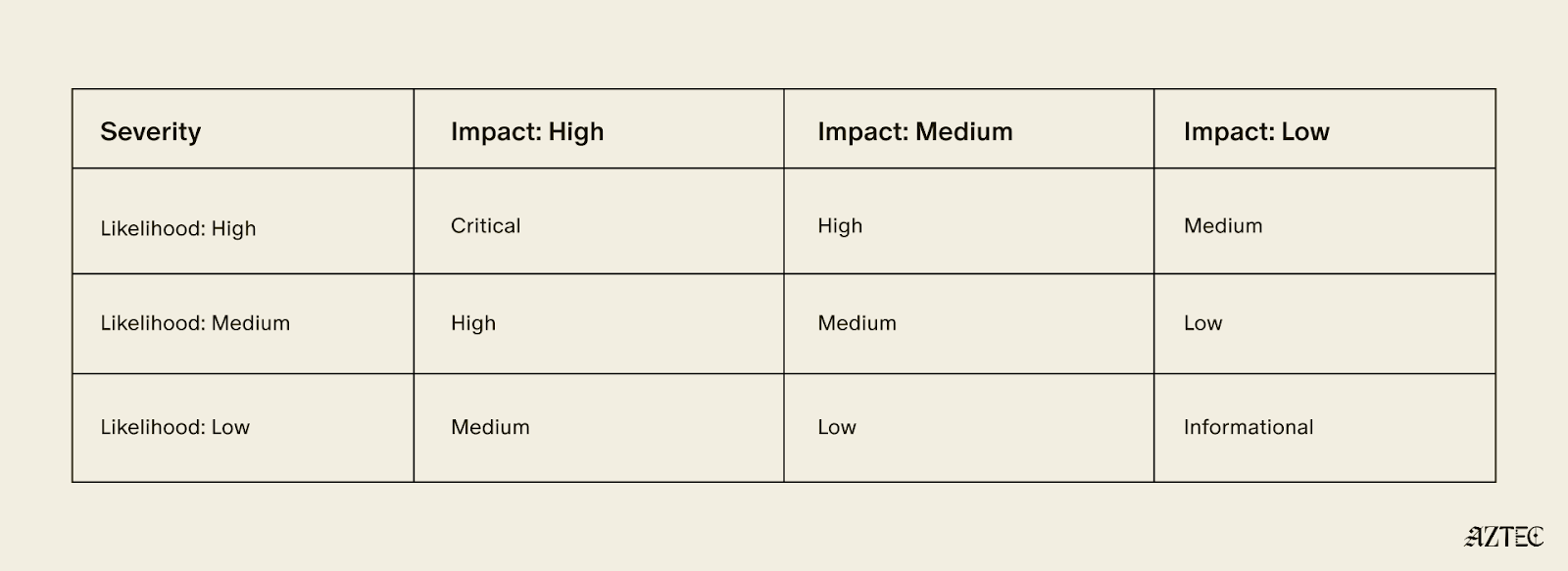

The transfer function has three error tests it must perform: the two safemath checks when updating balances[from] and balances[to], and validating that callvalue is 0.

If we were to implement this naively, we would end up with three conditional jump instructions and associated opcodes to set up jump labels. That’s 48 gas. Quite frankly, I’d rather put that gas budget towards another Malbec, so let’s start optimising.

Instead of directly invoking a jumpi instruction against our error test, let’s store it for later. We can combine all of our error tests into a single test and only one jumpi instruction. Putting this into action, let’s compute the error condition, but leave it on the stack:

// stack state:

// key(balances[to]) value 0x00 0x20 signature from to

dup1 sload // balances[to]

dup3 // value balances[to]

add // value+balances[to]

dup1 // value+balances[to] value+balances[to]

dup4 // value v+b v+b

gt // error_code value+balances[to]

Finally, we need to store value+balances[to] at the mapping key we computed previously. We need key(balances[to]) to be in front of value+balances[to], which we can perform with a simple swap opcode:

#define macro ERC20__TRANSFER_TO = takes(6) returns(7) {

// stack state:

// value 0x00 0x20 signature from to

dup6 0x00 mstore

0x40 0x00 sha3

dup1 sload // balances[to] key(balances[to]) ...

dup3 // value balances[to] ...

add // value+balances[to] ...

dup1 // value+balances[to] value+balances[to] ...

dup4 // value value+balances[to] value+balances[to] ...

gt // error_code value+balances[to] key(balances[to])

swap2 // key(balances[to]) value+balances[to] error_code

sstore // error_code ...

}

And that’s it! We’re done with updating balances[to]. What a breeze.
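For anyone who wants to convince themselves that the gt trick really catches overflow, here is a small Python sketch of the arithmetic. The function name add_with_flag is hypothetical; the wraparound is modelled with a modulo, since Python integers never overflow on their own.

```python
WORD = 1 << 256  # EVM words are 256 bits wide

def add_with_flag(balance: int, value: int):
    """Mirror add followed by gt: return the wrapped sum and an error flag."""
    total = (balance + value) % WORD   # add wraps around silently
    error = 1 if value > total else 0  # gt: value > value+balances[to]
    return total, error

print(add_with_flag(100, 25))      # (125, 0)
print(add_with_flag(WORD - 1, 2))  # (1, 1)
```

If the sum wraps, it ends up smaller than either operand, so comparing it against value is enough. The flag just sits there until we are ready to test it.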

Updating balances[from]

Next up, we need to repeat that process for balances[from], pillaging the parts of MATH__SUB that are useful.

#define macro ERC20__TRANSFER_FROM = takes(7) returns(8) {

// stack state:

// error_code, value, 0x00, 0x20, signature, from, to

caller 0x00 mstore

0x40 0x00 sha3

dup1 sload // balances[from], key(balances[from]), error_code,...

dup4 dup2 sub // balances[from]-value, balances[from], key, e,...

dup5 swap3 // key, balances[from]-value, balances[from], value

sstore // balances[from], value, error_code, value, ...

lt // error_code_2, error_code, value, ...

}

Now that we have both of our error variables on the stack, we can perform our error test! In addition to the two error codes, we want to test whether callvalue > 0. We don’t need gt(callvalue,0) here: any non-zero value of callvalue will trigger our jumpi instruction to jump.

callvalue or or jumpi

What an adorable little line of Huff.
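The same logic as a Python sketch, with hypothetical helper names. It assumes the jump target throws the transaction away, so it does not matter that the balances were already written before the test runs.

```python
WORD = 1 << 256

def sub_with_flag(balance: int, value: int):
    """Mirror sub followed by lt: wrapped difference plus an error flag."""
    remaining = (balance - value) % WORD
    error = 1 if balance < value else 0  # lt: balances[from] < value
    return remaining, error

def must_jump(callvalue: int, err_from: int, err_to: int) -> bool:
    """callvalue or or: any non-zero input makes the condition non-zero."""
    return (callvalue | err_from | err_to) != 0

_, err_from = sub_with_flag(100, 30)
print(must_jump(0, err_from, 0))  # False

_, err_from = sub_with_flag(10, 30)
print(must_jump(0, err_from, 0))  # True

print(must_jump(5, 0, 0))         # True
```

Three separate checks, one bitwise or each, and a single conditional jump at the end.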

Emitting Transfer(from, to, value)

The penultimate step in our method is to emit that event. Our stack state at this point is where we left it after ERC20__TRANSFER_INIT, so all we need to do is store value at memory index 0x00 and call the log3 opcode.

// stack state:

// value, 0x00, 0x20, event_signature, from, to

0x00 mstore log3

Finally, we need to return true (i.e. 1). We can do that by storing 0x01 at memory position 0x00 and calling return(0x00, 0x20).
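Here is a rough Python model of those last two steps, to show which stack items each opcode eats. The helpers are hypothetical stand-ins for mstore, log3 and return, the topics are string placeholders, and 42 is a made-up transfer value.

```python
memory = bytearray(32)
# Bottom of the stack first, top last. 42 is a hypothetical transfer value.
stack = ["to", "from", "signature", 0x20, 0x00, 42]

def mstore() -> None:
    offset, word = stack.pop(), stack.pop()
    memory[offset:offset + 32] = word.to_bytes(32, "big")

def log3():
    offset, size = stack.pop(), stack.pop()
    topics = [stack.pop() for _ in range(3)]
    return topics, bytes(memory[offset:offset + size])

def evm_return() -> bytes:
    offset, size = stack.pop(), stack.pop()
    return bytes(memory[offset:offset + size])

# 0x00 mstore log3
stack.append(0x00)
mstore()
topics, data = log3()

# 0x01 0x00 mstore 0x20 0x00 return
stack.extend([0x01, 0x00])
mstore()
stack.extend([0x20, 0x00])
returned = evm_return()

print(topics)                           # ['signature', 'from', 'to']
print(int.from_bytes(data, "big"))      # 42
print(int.from_bytes(returned, "big"))  # 1
```

Everything we parked on the stack in the initialisation macro gets consumed here, in reverse order, and the stack ends up empty.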

Putting it all together…

At long last, we have our transfer method! It’s this…thing

#define macro OWNER_LOCATION = takes(0) returns(1) {

0x01

}

#define macro ADDRESS_MASK = takes(1) returns(1) {

0x000000000000000000000000ffffffffffffffffffffffffffffffffffffffff

and

}

#define macro TRANSFER_EVENT_SIGNATURE = takes(0) returns(1) {

0xDDF252AD1BE2C89B69C2B068FC378DAA952BA7F163C4A11628F55A4DF523B3EF

}

#define macro ERC20 = takes(0) returns(0) {

caller OWNER_LOCATION() sstore

}

#define macro ERC20__TRANSFER_INIT = takes(0) returns(6) {

0x04 calldataload ADDRESS_MASK()

caller

TRANSFER_EVENT_SIGNATURE()

0x20

0x00

0x24 calldataload

}

#define macro ERC20__TRANSFER_TO = takes(6) returns(7) {

// stack state:

// value, 0x00, 0x20, signature, from, to

dup6 0x00 mstore

0x40 0x00 sha3

dup1 sload // balances[to], key(balances[to]), ...

dup3 // value, balances[to], ...

add // value+balances[to], ...

dup1 // value+balances[to], value+balances[to], ...

dup4 // value value+balances[to] ...

gt // error_code, value+balances[to], key(balances[to])

swap2 // key(balances[to]), value+balances[to], error_code

sstore // error_code, ...

}

#define macro ERC20__TRANSFER_FROM = takes(7) returns(8) {

// stack state:

// error_code, value, 0x00, 0x20, signature, from, to

caller 0x00 mstore

0x40 0x00 sha3

dup1 sload // balances[from], key(balances[from]), error_code,...

dup4 dup2 sub // balances[from]-value, balances[from], key, e,...

dup5 swap3 // key, balances[from]-value, balances[from], value

sstore // balances[from], value, error_code, value, ...

lt // error_code_2, error_code, value, ...

}

template

#define macro ERC20__TRANSFER = takes(0) returns(0) {

ERC20__TRANSFER_INIT()

ERC20__TRANSFER_TO()

ERC20__TRANSFER_FROM()

callvalue or or jumpi

0x00 mstore log3

0x01 0x00 mstore

0x20 0x00 return

}
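If you would rather poke at this without deploying anything, here is a toy Python stack machine that replays the same opcode sequence. It is a sketch under loud assumptions: hashlib.sha3_256 stands in for keccak256 (so the storage keys are not the real ones), the jump is assumed to land on an error handler that throws everything away, and the addresses ALICE and BOB are made up.

```python
import hashlib

WORD = 1 << 256
SIGNATURE = 0xDDF252AD1BE2C89B69C2B068FC378DAA952BA7F163C4A11628F55A4DF523B3EF

def stand_in_hash(data) -> int:
    # Assumption: sha3_256 is a stand-in for keccak256, which the standard
    # library lacks. Real storage keys will differ.
    return int.from_bytes(hashlib.sha3_256(data).digest(), "big")

def balance_key(address: int) -> int:
    return stand_in_hash(address.to_bytes(32, "big") + bytes(32))

def transfer(state, sender, to, value, callvalue=0):
    """Replay the transfer opcodes; return (new_state, logs) or raise."""
    storage, stack, memory = dict(state), [], bytearray(64)
    push, pop = stack.append, stack.pop

    def dup(n): push(stack[-n])
    def swap(n): stack[-1], stack[-1 - n] = stack[-1 - n], stack[-1]
    def mstore():
        offset, word = pop(), pop()
        memory[offset:offset + 32] = word.to_bytes(32, "big")
    def sha3():
        offset, size = pop(), pop()
        push(stand_in_hash(memory[offset:offset + size]))
    def sload(): push(storage.get(pop(), 0))
    def sstore():
        key, word = pop(), pop()
        storage[key] = word

    # Initialisation: to, from, signature, 0x20, 0x00, value
    push(to & ((1 << 160) - 1)); push(sender); push(SIGNATURE)
    push(0x20); push(0x00); push(value)
    # Update balances[to], leaving an error flag on the stack
    dup(6); push(0x00); mstore(); push(0x40); push(0x00); sha3()
    dup(1); sload(); dup(3)
    push((pop() + pop()) % WORD)  # add
    dup(1); dup(4)
    push(int(pop() > pop()))      # gt
    swap(2); sstore()
    # Update balances[from], leaving a second error flag
    push(sender); push(0x00); mstore(); push(0x40); push(0x00); sha3()
    dup(1); sload(); dup(4); dup(2)
    push((pop() - pop()) % WORD)  # sub
    dup(5); swap(3); sstore()
    push(int(pop() < pop()))      # lt
    # callvalue or or, then the conditional jump
    push(callvalue); push(pop() | pop()); push(pop() | pop())
    if pop():
        raise ValueError("transfer failed")  # assumed: error handler reverts
    # 0x00 mstore log3
    push(0x00); mstore()
    offset, size = pop(), pop()
    topics = [pop(), pop(), pop()]
    return storage, [(topics, bytes(memory[offset:offset + size]))]

ALICE, BOB = 0xA11CE, 0xB0B  # hypothetical addresses
state = {balance_key(ALICE): 100}
new_state, logs = transfer(state, ALICE, BOB, 30)
print(new_state[balance_key(ALICE)], new_state[balance_key(BOB)])  # 70 30
```

The function works on a copy of the state and only hands it back on success, which is a cheap way of imitating the fact that a failed transaction leaves storage untouched.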

Wasn’t that fun?

I think that’s a reasonable place to end this article. In the next chapter, we’ll deal with how to handle ERC20 allowances in a Huff contract.

Cheers,

Zac.